Radiologists are medical detectives who analyze the images produced by X-rays and MRIs. They do not operate scanners; instead, radiologists sit in dark rooms analyzing numerous cross-sectional “slices” of each patient. A single scan can look like a puzzle, and radiologists solve puzzles like this continuously throughout a typical shift.

Even a very skilled professional has limits. After looking at images for hours, radiologists develop a mental state called perceptual fatigue. It is similar to searching for one individual in a crowded picture: scanning the entire picture takes time, and attention wears down along the way. Research has identified perceptual fatigue as a major contributor to errors in interpreting medical images. These errors do not happen because the radiologist lacks training or knowledge about medical imaging. They happen because the radiologist has to process too much visual information.

The ultimate goal of implementing AI in medical imaging analysis is not to eliminate radiologists but to help them manage their daily workload. The intention is for the AI to be a radiologist’s tireless assistant: it analyzes all of a patient’s images before the radiologist views them and helps identify minor changes that a tired radiologist might miss at the end of the day. That allows the radiologist to focus on providing the best possible care to the patient.

AI in medical imaging: Smarter scans with AI

Deep learning, a branch of machine learning, is used to evaluate and analyze medical imaging data, specifically X-rays, computed tomography (CT) scans, magnetic resonance imaging (MRI) scans, ultrasound images, and mammograms.

During training, a machine learning system learns to recognize trends and patterns from large volumes of labeled medical images. Its predictions are compared with the labels annotated by physicians (e.g., “lung nodule” or “no bleeding”), and the model is fine-tuned to reduce the mismatch and improve its accuracy.

After training, this model can analyze an image to identify potentially problematic areas, determine specific anatomical structures, and possibly report clinical findings.

In radiology, AI is being used as a “second reader.” The AI rapidly scans images for emergent conditions, such as a potential stroke or internal hemorrhage, and moves those studies to the front of the radiologist’s queue. It also flags areas in an image that may show subtle lesions a radiologist might miss. In addition, AI can handle many of the mundane tasks involved in radiology, including organ segmentation, bone age determination, and monitoring of tumors over time. The advantages include more consistent results, fewer hours of routine work for radiologists, and faster patient turnaround times. These advantages are best realized in high-volume environments.

The AI does not understand images like a physician would. Instead, the AI locates statistically significant patterns within images; thus, the AI’s success depends on the quality of the images and on the similarity between the population from which the images were taken and the population used to develop/train the model. Differences among hospitals or MRI scanners, as well as differences in protocols, can also affect models. High numbers of false positives could increase the workload for clinicians who need to follow up on them, while high numbers of false negatives may put patients at risk because clinicians rely on the tool.
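The trade-off between false positives and false negatives is usually summarized with two numbers, sensitivity and specificity. The sketch below is a minimal illustration with made-up labels (1 means a finding is present); the function names and the sample data are assumptions for this example, not part of any particular product.

```python
def confusion_counts(truth, predicted):
    """Count true/false positives and negatives for binary labels (1 = finding present)."""
    pairs = list(zip(truth, predicted))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # real findings the tool flagged
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false alarms (extra follow-up work)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # missed findings (patient risk)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # correctly left unflagged
    return tp, fp, fn, tn


def sensitivity(tp, fn):
    """Share of real findings that were flagged."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def specificity(tn, fp):
    """Share of normal studies that were correctly left unflagged."""
    return tn / (tn + fp) if (tn + fp) else 0.0


# Illustrative data only: 3 studies with a finding, 5 without.
truth = [1, 1, 1, 0, 0, 0, 0, 0]
predicted = [1, 1, 0, 1, 0, 0, 0, 0]

tp, fp, fn, tn = confusion_counts(truth, predicted)
print(f"sensitivity={sensitivity(tp, fn):.2f}, specificity={specificity(tn, fp):.2f}")
```

A tool tuned for very high sensitivity tends to produce more false alarms, and a tool tuned for very high specificity tends to miss more findings, which is why both numbers are reported together.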

Because of this risk, AI-based medical imaging tools typically go through clinical validation studies before they are deployed, and many are also monitored after deployment. Many of these tools are classified as medical devices, and tools classified this way must comply with regulations governing their safe use and performance. Today’s most successful strategy for using AI in medical imaging is collaboration. The AI performs rapid processing and identifies patterns in images, while the clinician adds context, makes the decisions and judgments, and accepts liability.

So, What Is “Medical Imaging AI” in Simple Terms?

When we talk about AI, we usually think of robots from films. But medical imaging AI cannot think, and it does not understand what a lung is. It is highly developed software designed to perform one action: identify patterns in images. There is incredible power in that kind of software, but it is misleading to say that it understands or has thoughts. It is best seen as a superpowered magnifying glass used to help identify a very particular detail in an image.

The AI tool acts as a tireless assistant to the radiologist (the doctor who reads your scan). Think of the “Find” feature on a computer that lets you locate a single word in an extremely large document. Before the radiologist sees the image, the AI reviews it and identifies minute patterns that could easily go unnoticed by the human eye. It is another pair of eyes that never gets fatigued, and it allows the radiologist to concentrate on the most important parts of the image.

Unlike generalist AIs (such as Siri), diagnostic AIs are specialized. For example, an AI that detects small fractures on an X-ray cannot find pneumonia in a lung scan. Each diagnostic AI is trained to be an expert at identifying one thing by reviewing a very large number of example images of the one thing it was trained to find. That narrow focus is why these tools perform so well.

At-a-Glance: What AI Actually Does in Medical Imaging

| Task | What AI Does | Why It Matters |
|---|---|---|
| Detection | Finds abnormalities | Saves time |
| Segmentation | Highlights organs/tissues | Precision |
| Classification | Labels disease types | Faster diagnosis |
| Prediction | Forecasts disease risk | Preventive care |

Medical Imaging Technology: Modern MRI, CT, and X-ray tools

Medical imaging technology is the use of diagnostic tools to create pictures of the inside of the body. These images allow clinicians to diagnose diseases and develop treatment options; they also provide information to guide procedures and monitor recovery. There are several types of imaging technologies, with five being the most commonly used: X-ray, Computed Tomography (CT), Magnetic Resonance Imaging (MRI), Ultrasound, and Nuclear Medicine (PET and SPECT).

The first medical imaging modality was the X-ray. An X-ray creates an image of dense structures in the body using low levels of ionizing radiation. It is primarily useful for visualizing bone or lung problems. A CT scanner (also known as a CAT scanner) is essentially an advanced version of an X-ray machine. While a traditional X-ray produces a single flat image, a CT scanner acquires many cross-sectional slices and can combine them into a three-dimensional image of an organ, blood vessel, or traumatic injury.

Although CT scanners offer a much greater level of detail about internal organs and trauma than X-rays, they also deliver a significantly higher radiation dose. For this reason, clinicians use various dose-reduction strategies to limit their patients’ radiation exposure while still obtaining high-quality images.

MRI creates images of soft tissues in the body, including the brain, spinal cord, muscles, and joints, by combining strong magnetic fields with radio waves instead of ionizing radiation. Different MRI sequences emphasize different tissue characteristics; this allows for high-quality imaging of tumors, inflammatory processes, and stroke.

Ultrasound uses sound waves to create images of the body’s internal structures. It is low cost, easy to use, and free of ionizing radiation, and it is one of the most portable imaging modalities available. Examples include assessing fetal development during pregnancy, creating echocardiographic images of the heart, and assessing abdominal organs and blood flow.

Nuclear medicine creates images that show how the body functions, using a small amount of radioactive material known as a “tracer”. The tracer is typically administered intravenously, and the scanner detects where and how much of it accumulates throughout the body. This information is useful for determining whether an organ is functioning properly, whether there is evidence of cancer metastasis, whether the heart is being adequately supplied with blood (perfusion), and whether there is abnormal metabolic activity.

Medical Imaging Technologies today provide high-quality images and utilize advanced digital storage and communications (PACS), standardized image formats (DICOM), software to produce 3D reconstructions and software to measure object size and location on images. Emerging trends in Medical Imaging Technology include addressing concerns about radiation exposure from scans, reducing scan times, improving device mobility, and applying Artificial Intelligence to improve the speed and accuracy of clinical decision-making.

How Do You Teach a Computer to Read a Medical Scan?

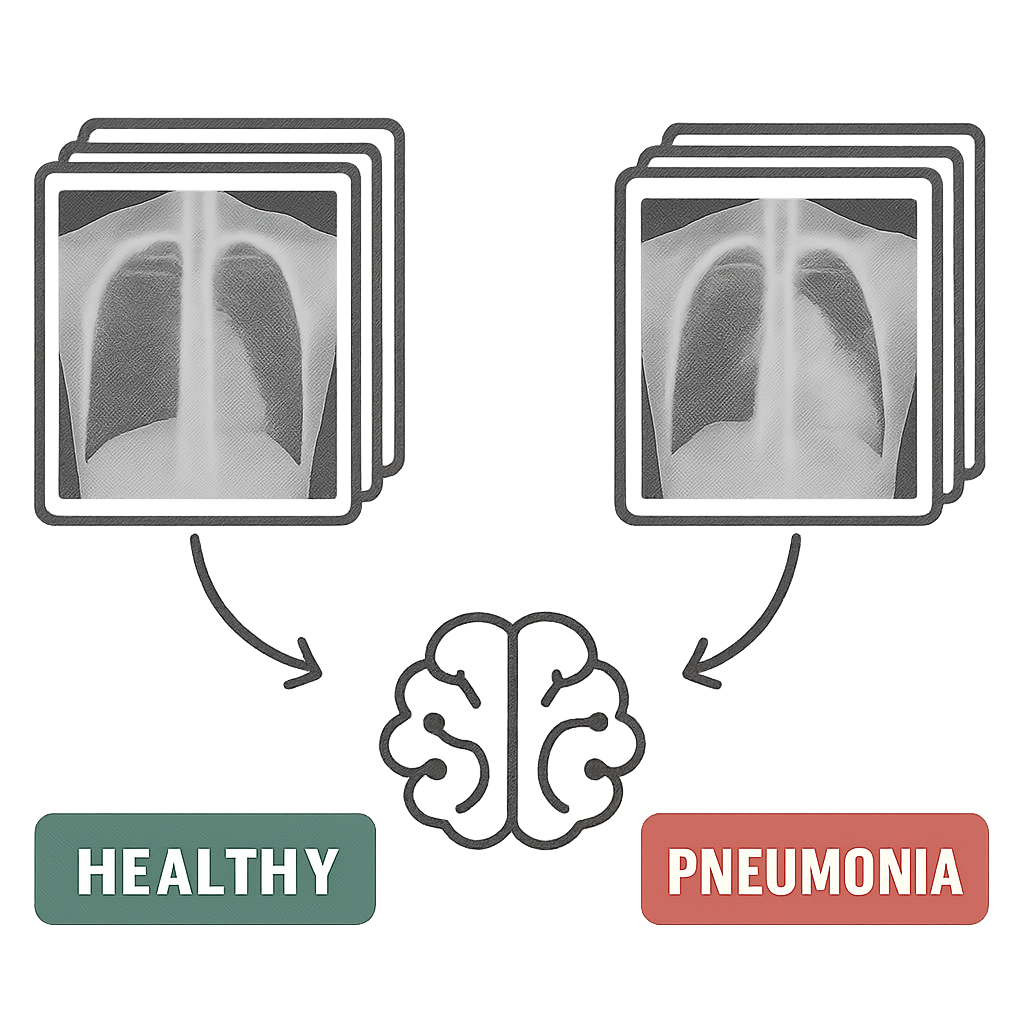

Medical students are not simply handed a textbook and expected to become medical professionals; they learn by studying many examples. Training an AI to examine scans works in a similar way, like handing a student a very large deck of flash cards: the training method relies on access to a substantial library of examples. The program is not given a set of predefined criteria for deciding whether a chest X-ray indicates pneumonia.

Rather, the AI uses its ability to discover patterns in the data to develop its own definition of pneumonia. The images used to train the AI are not chosen randomly. Each one has already been evaluated by a human expert, who classified and labeled it as either “diseased” or “healthy.” For example, one million chest X-rays labeled “pneumonia” and another million labeled “healthy” would constitute a dataset known as labeled data. That labeled dataset is the course material the AI studies.

By looking at and comparing millions of labeled images, the AI identifies relationships among them. It learns how subtle differences in texture, shadows, and shapes tend to appear in images labeled “pneumonia” and tend to be absent from images labeled “healthy”. No individual human could review that many examples, so the AI benefits from considerably more information than any physician could observe throughout his or her career.

Once the AI has studied such a massive body of data, it becomes highly skilled at identifying visual clues associated with various diseases. AI systems do not think as physicians do; yet they have been trained to recognize the same visual clues that physicians rely upon when making diagnoses. Because AI can become highly specialized, it can pick out very subtle visual details, like finding a needle in a haystack or a tiny crack in an X-ray.

Behind the Scenes: How AI Reads a Scan

| Stage | What Happens | Result |
|---|---|---|
| Data Input | MRI/X-ray images uploaded | Raw scan |
| Preprocessing | Noise removal, normalization | Clean data |
| Feature Extraction | Patterns detected | Key signals |
| Model Prediction | AI identifies issue | Diagnosis suggestion |
| Output | Visual + report | Doctor review |
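The stages in the table can be sketched as a chain of small functions. This is a toy illustration only: the 4x4 grid of pixel values, the two hand-written features, and the fixed threshold rule are all assumptions standing in for a real scan and a trained model.

```python
def preprocess(image):
    """Preprocessing: min-max normalize pixel values to the 0..1 range."""
    flat = [v for row in image for v in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1  # avoid dividing by zero on a uniform image
    return [[(v - lo) / span for v in row] for row in image]


def extract_features(image):
    """Feature extraction: two toy features computed from normalized pixels."""
    flat = [v for row in image for v in row]
    mean = sum(flat) / len(flat)
    bright_fraction = sum(1 for v in flat if v > 0.8) / len(flat)
    return {"mean": mean, "bright_fraction": bright_fraction}


def predict(features, threshold=0.1):
    """Model prediction: a hypothetical fixed rule standing in for a trained model."""
    return features["bright_fraction"] > threshold


def report(flagged, features):
    """Output: a short text summary for the doctor to review."""
    status = "flag for radiologist review" if flagged else "no finding flagged"
    return f"{status} (bright fraction {features['bright_fraction']:.2f})"


# Data input: a made-up 4x4 "scan" with a bright 2x2 region in the middle.
raw_scan = [
    [10, 12, 11, 10],
    [11, 200, 210, 12],
    [10, 205, 198, 11],
    [12, 11, 10, 10],
]

clean = preprocess(raw_scan)
features = extract_features(clean)
flagged = predict(features)
print(report(flagged, features))
```

In a real system the feature extraction and prediction steps are learned from labeled data rather than written by hand, but the order of the stages is the same.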

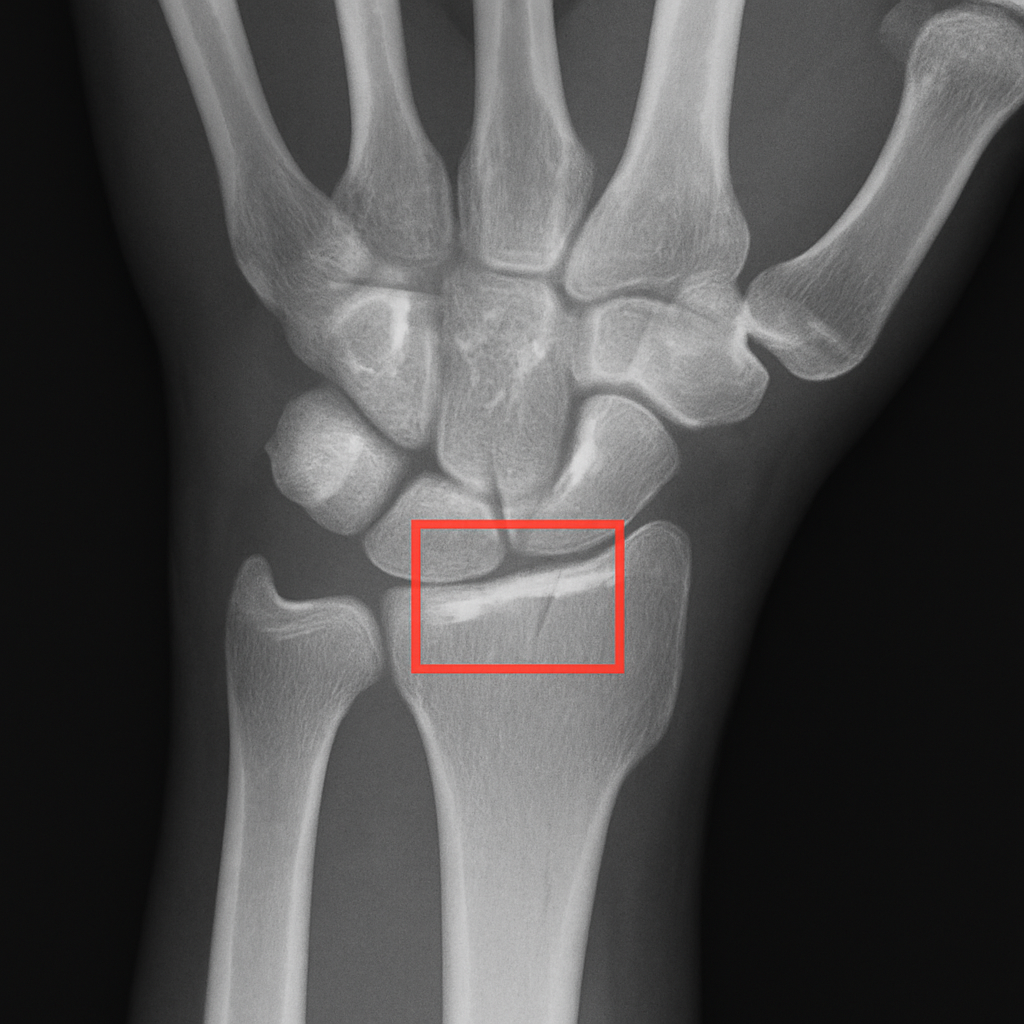

Finding the Needle in a Haystack: How AI Spots Tiny Fractures

A single examination can produce dozens of X-rays, and finding a very small crack among them can be difficult. Radiologists must also view many images each day, which creates a large backlog of exams and makes it hard to determine quickly whether a bone is fractured. A second, very fast set of “eyes” can help with this problem.

In addition to providing a second set of “eyes,” AI can help identify fractures and prevent medical imaging errors by reviewing a patient’s X-ray or CT scan within seconds and sending the case directly to the radiologist if it finds signs of a possible fracture. This lets the radiologist evaluate the potentially serious cases first, while the less serious cases are evaluated later.

Another way that AI can assist in rapid and accurate fracture diagnosis is by recognizing patterns of injury that are hard for human eyes to see. While this technology offers great promise in recognizing the earliest stages of a fractured bone, its application in detecting early cancer may offer an even greater benefit. Instead of searching an X-ray for a small crack in a bone, AI can use the same pattern recognition to identify the earliest signs of disease in an image. Such applications could have significant benefits for patients.

The Power of a Second Look: AI and Early Cancer Detection

That same ability to detect even the slightest anomaly in a pattern can also serve as a life-saving tool when AI is used to help diagnose early-stage cancer. For instance, on a mammogram, the very small areas of abnormal growth that can signal the beginning stages of breast cancer appear almost indistinguishable from dense normal tissue. The radiologist is left to wade through the many complexities of mammography images to find minute differences. If the radiologist misses something, the diagnosis may be delayed; another pair of eyes with heightened awareness is therefore a great benefit.

It’s not just about being able to quickly diagnose a broken bone; the same capability opens a new opportunity. Just as AI uses pattern recognition to find a small break, it can examine your mammogram for the smallest possible indicators that may be the first sign of breast cancer.

The doctor receives a rich visual tool to support their evaluation of the mammogram, rather than an automatic “yes” or “no” based solely on the AI. The physician receives a visual representation of where the AI believes there is something abnormal, in the form of a heat map that could be conceptualized as a weather map overlaid on top of the mammogram.

The areas the physician should focus on will be highlighted on the heat map by the AI in bright red and yellow. These are the same colors used to highlight severe weather (storms). A physician would still need to make their own decision about whether the area appears suspicious enough to warrant further investigation. In other words, the AI is meant to assist the physician by serving as a highly trained “pointer,” drawing attention to areas the physician needs to examine more closely.
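A heat map is, underneath, a grid of suspicion scores laid over the image. The sketch below assumes a small grid of scores between 0 and 1 and two hypothetical color cutoffs (0.7 for yellow, 0.85 for red); real tools choose their own scales and colors.

```python
def hot_spots(heat_map, threshold=0.7):
    """Return (row, col, score) for cells at or above the threshold, highest score first."""
    cells = [
        (r, c, score)
        for r, row in enumerate(heat_map)
        for c, score in enumerate(row)
        if score >= threshold
    ]
    return sorted(cells, key=lambda cell: cell[2], reverse=True)


def color_for(score):
    """Map a score to an overlay color; the cutoffs are illustrative assumptions."""
    if score >= 0.85:
        return "red"
    if score >= 0.7:
        return "yellow"
    return "none"


# Made-up suspicion scores for a 3x3 patch of a mammogram.
heat_map = [
    [0.05, 0.10, 0.08],
    [0.12, 0.91, 0.74],
    [0.07, 0.30, 0.11],
]

for row, col, score in hot_spots(heat_map):
    print(f"look at row {row}, column {col}: {color_for(score)} ({score:.2f})")
```

The output is a list of places to look, not a diagnosis; deciding what each highlighted area means stays with the physician.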

Physicians are using AI to create a safety net. AI technology provides the capability of a “second read”: each mammogram is effectively reviewed twice, once by the AI and once by the physician, which can help reduce errors in reading the images. Studies have demonstrated that physicians using AI tools can detect more cancers than those without access to such tools.

Real Case Snapshot: AI Detecting Cancer Earlier

| Case | Breast Cancer Detection (Google Health) |
|---|---|
| Method | AI model analyzed mammograms |
| Result | Reduced false positives by 5.7% (US) |
| Result | Reduced false negatives by 9.4% |
| Impact | Earlier detection; fewer unnecessary biopsies |

Seeing the Invisible: How AI Helps Analyze Lung and Brain Scans

A mammogram captures a flat image of one part of your body, whereas MRI and CT scans take hundreds or even thousands of thin cross-sectional pictures that can be combined into a 3D model. A physician typically looks through these pictures one at a time; AI systems can analyze many of them at once and work with the full 3D volume directly. This is especially helpful for complex organs such as the brain or lungs.

The additional benefit of being able to see the entire organ allows AI systems to measure how much of the organ has been damaged/affected more accurately than a human could visually estimate. For example, when treating a patient with pneumonia, a doctor would want to know what percentage of the patient’s lungs was infected.

Instead of relying on visual assessment to determine the extent of lung involvement, AI algorithms using lung CT scans can objectively quantify the percentage of lung area affected. In like manner, being able to objectively assess organ function will be critical to evaluating the effectiveness of cancer treatments, especially for monitoring tumors that are shrinking or growing after treatment.
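The percentage calculation itself is simple once the lung and the affected region have been outlined. The sketch below assumes two binary masks for a single slice (1 marks a pixel inside the region); the masks and the function name are illustrative, and a real tool would sum over every slice of the 3D scan.

```python
def percent_affected(lung_mask, lesion_mask):
    """Percentage of lung pixels that also fall inside the lesion mask."""
    lung_pixels = 0
    affected_pixels = 0
    for lung_row, lesion_row in zip(lung_mask, lesion_mask):
        for in_lung, in_lesion in zip(lung_row, lesion_row):
            if in_lung:
                lung_pixels += 1
                if in_lesion:
                    affected_pixels += 1
    if lung_pixels == 0:
        raise ValueError("lung mask is empty")
    return 100.0 * affected_pixels / lung_pixels


# Made-up 4x4 masks: 8 lung pixels, 2 of which are marked as affected.
lung_mask = [
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
    [0, 1, 1, 0],
]
lesion_mask = [
    [0, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
]

print(f"{percent_affected(lung_mask, lesion_mask):.1f}% of the lung is affected")
```

The hard part in practice is producing accurate masks; the arithmetic that follows is what makes the result repeatable from one scan to the next.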

AI trained on MRI images can objectively evaluate whether a tumor has changed in size after treatment, and similarly, AI can use advanced computer vision in Digital Pathology and Radiology to provide objective data to support a physician’s clinical judgment regarding the efficacy of treatment. As an adjunctive tool to a physician’s clinical expertise and training, the AI provides objective, fact-based (measurable) data to aid in the physician’s evaluation process.

Rather than simply identifying a problem and determining whether it is worsening, the physician can now use quantifiable data to monitor the patient’s improvement.

Image Analysis AI: AI That Reads Images

Computer Vision AI uses various algorithms to analyze images. A person would need to review every single image, but with AI, you could have your computer analyze each one and determine what is happening in it. For instance, your computer could be analyzing images taken by a satellite or drone, or by a camera. This type of technology has applications in numerous areas, such as medicine (medical imaging), the military (surveillance), manufacturers (quality control), retailers (inventory tracking), farmers (crop monitoring), and remote sensing (satellite imagery).

Most computer vision today is built on a form of deep learning called Convolutional Neural Networks (CNNs) and, increasingly, Vision Transformers. Deep learning models learn from examples. During the training phase, the model views a vast number of images along with their labels (e.g., crack, tumor, cat, flood). Based on this information, the model adjusts its internal parameters to minimize its errors. Once trained, the model can classify new images, provide confidence levels for its answers, and create pixel-level maps for new images.
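The idea of adjusting internal parameters to minimize errors can be shown with a far smaller model than a CNN. The sketch below swaps in a one-feature logistic regression trained by gradient descent; the single “feature” per image, the six labeled examples, and the learning settings are all assumptions chosen to keep the example readable.

```python
import math


def sigmoid(z):
    """Squash any number into a 0..1 confidence value."""
    return 1.0 / (1.0 + math.exp(-z))


def train(examples, epochs=2000, learning_rate=0.5):
    """Fit a weight and bias by repeatedly nudging them to reduce prediction error."""
    weight, bias = 0.0, 0.0
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for feature, label in examples:
            error = sigmoid(weight * feature + bias) - label  # prediction minus truth
            grad_w += error * feature
            grad_b += error
        # Move each parameter a small step in the direction that lowers the error.
        weight -= learning_rate * grad_w / len(examples)
        bias -= learning_rate * grad_b / len(examples)
    return weight, bias


# Made-up labeled data: (feature value, label), where 1 = abnormal and 0 = normal.
examples = [(0.1, 0), (0.2, 0), (0.3, 0), (0.7, 1), (0.8, 1), (0.9, 1)]

weight, bias = train(examples)
confidence_high = sigmoid(weight * 0.85 + bias)
confidence_low = sigmoid(weight * 0.15 + bias)
print(f"feature 0.85 -> {confidence_high:.2f}, feature 0.15 -> {confidence_low:.2f}")
```

A deep network has millions of parameters instead of two and learns its own features from pixels, but the loop of predict, compare with the label, and adjust is the same.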

Common tasks involve:

- Classification: determining what you see in an image (normal/abnormal).

- Detection: drawing a box around a specific object (e.g., a lesion).

- Segmentation: defining the exact edges of an area (organ).

- Measurement/Tracking: measuring how large something is, or how it changes with time.

- Anomaly Detection: identifying unusual image characteristics when few or no labeled examples of the abnormality are available to train from.
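The first few tasks in the list differ mainly in how much detail they return. The sketch below starts from a segmentation mask (the most detailed output) and derives a detection-style bounding box, a measurement, and a classification from it; the mask and the function names are illustrative assumptions.

```python
def bounding_box(mask):
    """Detection-style output: (top, left, bottom, right) of the marked region, or None."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    cols = [c for row in mask for c, value in enumerate(row) if value]
    if not rows:
        return None
    return min(rows), min(cols), max(rows), max(cols)


def area_in_pixels(mask):
    """Measurement: number of pixels inside the segmented region."""
    return sum(value for row in mask for value in row)


def classify(mask):
    """Classification: a single label for the whole image."""
    return "abnormal" if area_in_pixels(mask) > 0 else "normal"


# Made-up segmentation mask: 1 marks pixels that belong to a lesion.
mask = [
    [0, 0, 0, 0, 0],
    [0, 0, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 0, 0, 0],
]

print(classify(mask), bounding_box(mask), area_in_pixels(mask))
```

Tracking over time then amounts to repeating the measurement on a later scan and comparing the two numbers.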

Good performance requires good-quality images and good-quality training data. The views, cameras, and lighting used during training may differ substantially from those used in practice, and the demographics of patients in the training data may differ from those typically seen in everyday practice. Either mismatch can reduce model accuracy. Teams that use these systems also have difficulty with “explanations” because most of these systems are considered “black boxes.” To make the outputs easier to interpret, many teams have begun adding heatmap or attention-map overlays that show which regions influenced the result.

Generally speaking, the best outcomes occur when a team uses image-analysis AI with human review of the results. Image analysis AI can improve efficiency and speed through streamlining and eliminating redundant task processes. Also, AI’s ability to perform consistently helps improve efficiency. However, the human component provides the necessary perspective, decision-making, and accountability to determine whether a diagnosis is correct.

AI in Radiology: Faster, more accurate diagnoses

Radiology has become one of the largest fields for machine learning (deep learning), which is being used for image analysis, decision support, and workflow optimization with medical imaging exams. Radiology produces an enormous amount of information from many imaging modalities, including computed tomography (CT), ultrasound (US), magnetic resonance imaging (MRI), mammography, and others. Deep learning algorithms can be applied to pattern recognition in all types of medical images produced by radiologic studies. They can also help prioritize acute studies and take over time-consuming tasks that radiologists otherwise perform manually.

Two of the most common applications of artificial intelligence are computer-aided detection and triage. Artificial intelligence can highlight potential intracranial hemorrhages on head CT scans, large-vessel occlusions on stroke imaging, pneumothoraces on chest X-rays, and, potentially, suspicious lung nodules. These systems, when integrated into a clinical workflow, can prioritize important cases, moving them to the top of the reading list so emergency treatments can be provided sooner to critically ill patients.
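Moving urgent cases to the top of the reading list is essentially a sorting problem. The sketch below assumes each study carries a hypothetical AI urgency score between 0 and 1 and uses an illustrative cutoff of 0.8; the `Study` fields and the threshold are not taken from any specific PACS or vendor.

```python
from dataclasses import dataclass


@dataclass
class Study:
    accession: str        # hypothetical study identifier
    arrival_order: int    # position in the original first-come, first-served list
    urgency_score: float  # hypothetical AI output, 0 (routine) to 1 (urgent)


def prioritize(worklist, urgent_threshold=0.8):
    """Put flagged studies first (most urgent first), then the rest in arrival order."""
    urgent = [s for s in worklist if s.urgency_score >= urgent_threshold]
    routine = [s for s in worklist if s.urgency_score < urgent_threshold]
    urgent.sort(key=lambda s: (-s.urgency_score, s.arrival_order))
    routine.sort(key=lambda s: s.arrival_order)
    return urgent + routine


worklist = [
    Study("A", 1, 0.10),
    Study("B", 2, 0.95),  # e.g. a suspected hemorrhage
    Study("C", 3, 0.30),
    Study("D", 4, 0.85),
]

reading_order = [s.accession for s in prioritize(worklist)]
print(reading_order)
```

Nothing is removed from the list: every study is still read by a radiologist, only the order changes.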

Artificial intelligence enhances radiologists’ ability to consistently and accurately perform quantitative aspects of radiology and to provide better segmentation and measurement. For example, tools are available that can automatically identify and quantify organs or masses; measure the volume of structures or tumors; compare tumor sizes; determine patients’ skeletal age; and measure calcifications in the coronary arteries. This type of automation will allow radiologists to spend less time on these tasks and achieve greater consistency in their assessments when comparing images taken at different times.
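Volume measurement and comparison over time reduce to a few lines of arithmetic once a structure has been segmented. The sketch below assumes the segmentation step has already produced a voxel count and that the voxel spacing in millimeters is known; the numbers are invented for illustration.

```python
def volume_ml(voxel_count, spacing_mm):
    """Volume in milliliters from a voxel count and (x, y, z) voxel spacing in mm."""
    sx, sy, sz = spacing_mm
    return voxel_count * sx * sy * sz / 1000.0  # 1 mL = 1000 cubic millimeters


def percent_change(baseline, follow_up):
    """Relative change from baseline; negative values mean the structure shrank."""
    return 100.0 * (follow_up - baseline) / baseline


# Made-up example: the same tumor segmented on two scans with identical spacing.
spacing = (1.0, 1.0, 2.0)
baseline_volume = volume_ml(12000, spacing)
follow_up_volume = volume_ml(9000, spacing)

print(f"baseline {baseline_volume:.1f} mL, follow-up {follow_up_volume:.1f} mL, "
      f"change {percent_change(baseline_volume, follow_up_volume):.1f}%")
```

Because the same calculation is applied to every scan, the comparison does not depend on who measured the images or when.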

AI will not be able to replace radiologists, for two reasons. First, although an AI model may have been trained on thousands of examples, it is still a machine learning system. It can make mistakes that stem from how it was trained, from variations in patient population, or from differences in scanners and scanner protocols.

Second, errors have consequences. If the AI model produces false positives, the radiologist has to do more work to verify the result. If it produces false negatives and clinicians rely solely on the AI model, a patient’s condition could be missed, which could be very dangerous. Because of these potential problems, most developers treat the results generated by an AI model as decision support only. The goal is for the radiologist to keep diagnosing patients accurately and developing the best possible treatment plans.

For AI-based radiology products to be adopted successfully, developers need to complete extensive clinical validation and obtain the appropriate regulatory authorization (in the US, many such tools are regulated by the FDA as Class II medical devices). The technology should also be integrated into the existing PACS/RIS system(s), offer a simple-to-use interface so that end users are comfortable working with it, and be covered by ongoing evaluation processes that detect and mitigate performance degradation over time.
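One simple way to watch for performance degradation is to track how often the AI’s flag agrees with the radiologist’s final read over a rolling window of recent cases. The sketch below is an assumption about how such a check could look; the window size, the 0.90 baseline, and the 0.05 tolerance are illustrative values, not regulatory requirements.

```python
from collections import deque


class PerformanceMonitor:
    """Tracks agreement between AI flags and the radiologist's final read."""

    def __init__(self, window_size=100, baseline=0.90, tolerance=0.05):
        self.results = deque(maxlen=window_size)  # keeps only the most recent cases
        self.baseline = baseline
        self.tolerance = tolerance

    def record(self, ai_flagged, radiologist_confirmed):
        """Store whether the AI and the radiologist agreed on this case."""
        self.results.append(ai_flagged == radiologist_confirmed)

    def agreement(self):
        """Agreement rate over the current window, or None if no cases yet."""
        if not self.results:
            return None
        return sum(self.results) / len(self.results)

    def degraded(self):
        """True once the window is full and agreement falls below baseline minus tolerance."""
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.agreement() < self.baseline - self.tolerance


monitor = PerformanceMonitor(window_size=10)
for _ in range(9):
    monitor.record(True, True)    # AI and radiologist agree
monitor.record(True, False)       # one disagreement
early_alert = monitor.degraded()

for _ in range(3):
    monitor.record(False, True)   # a run of missed findings
late_alert = monitor.degraded()
print(early_alert, late_alert, monitor.agreement())
```

An alert like this does not say why performance dropped; it is a prompt for people to investigate, for example a new scanner or a change in protocol.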

The ultimate relationship between AI and radiology professionals needs to be collaboration instead of competition. AI provides faster processing, better pattern detection and higher measurement accuracy. On the other hand, radiologists will continue to provide critical judgment and oversee all aspects of a patient’s diagnosis and care.

Radiology Image Analysis: Smart Analysis of X-rays and MRIs

Radiology Image Analysis is the process by which radiologic images are evaluated to assess anatomical structures and to support a diagnosis of the abnormalities/diseases present within those structures. There are many imaging modalities used in radiology image analysis, including X-rays, Computed Tomography (CT), Magnetic Resonance Imaging (MRI), Ultrasonography, and Mammography. A radiologist uses all information from an image evaluation, along with other patient information (such as lab values/symptoms), to reach a conclusion and write a formal report on their findings.

Radiologists typically follow a structured approach to evaluate radiologic images. This includes: first, confirming that the proper patient and study were identified; second, assessing the image quality; third, evaluating the most critical parts of the body being studied (regional assessment); fourth, comparing the current images to previous studies done on the same patient; fifth, using standard terminology to document their findings.

In assessing radiologic images, radiologists look for signs indicative of disease, including but not limited to masses, hemorrhage, fractures, inflammation, fluid collections, enlarged organs, and differences in tissue density or signal intensity. In addition to identifying individual indicators of disease, radiologists also consider patterns present in the images. For example, patterns may include the distribution of pulmonary opacities, whether there is symmetry between the left and right sides, and how tissues appear after contrast enhancement.

Measurements taken by a doctor during an evaluation of radiology images are highly important. A physician can measure lesion or organ size, the degree to which vessels narrow, tumor volume, and changes over time in cancer patients to determine whether their treatment is working. In critical emergencies such as acute stroke, pulmonary embolism, bleeding within the body, and bowel obstruction, rapid diagnosis can result in improved patient outcomes.

Currently, PACS (Picture Archiving and Communications Systems) store images and provide the tools for viewing them. Clinicians can adjust window settings (the range of brightness values displayed) to bring out subtle differences between structures that otherwise look nearly identical. Multiplanar reconstruction and three-dimensional imaging allow clinicians to better understand complex anatomical structures. Many artificial intelligence-assisted software applications have also been developed to support clinician decision-making by identifying and segmenting tumors, highlighting regions of interest, quantifying measurements, and so on.
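Window settings on CT are commonly described by a center and a width, measured in Hounsfield units (HU). The sketch below shows the basic mapping from an HU value to a display gray level; the preset values are typical textbook approximations included as assumptions, and actual presets vary by site and vendor.

```python
def apply_window(hu, center, width):
    """Map a Hounsfield value to a 0..255 gray level for a given window center and width."""
    low = center - width / 2
    high = center + width / 2
    if hu <= low:
        return 0      # everything below the window is shown as black
    if hu >= high:
        return 255    # everything above the window is shown as white
    return round(255 * (hu - low) / (high - low))


# Approximate presets, for illustration only.
WINDOWS = {
    "brain": (40, 80),
    "lung": (-600, 1500),
    "bone": (400, 1800),
}

# The same tissue value looks very different depending on the window chosen.
for name, (center, width) in WINDOWS.items():
    print(name, apply_window(20, center, width))
```

A narrow window spreads a small range of values across the full gray scale, which is what makes subtle soft-tissue differences visible.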

The primary focus for radiologists regarding quality and safety issues includes: radiation exposure from computed tomography scans and x-ray examinations, risks associated with contrast media used in some diagnostic tests, and the risk of incidental findings.

Where AI Is Already Changing Your Hospital Experience

| Area | AI Application | Benefit to Patient |
|---|---|---|
| Emergency Care | Fast scan triage | Faster treatment |
| Oncology | Tumor detection | Early diagnosis |
| Neurology | Brain scan analysis | Stroke detection |
| Pulmonology | Lung scan AI | COVID & disease detection |
| Orthopedics | Fracture detection | Accurate diagnosis |

Is AI Really More Accurate Than a Doctor?

You’ve probably heard: “Artificial Intelligence (AI) is superior to doctors when it comes to diagnosis.” The hype that surrounds those studies can certainly be exciting. However, virtually all of these studies test an AI system on a specific, limited diagnostic function under tightly controlled conditions. Real-world clinical practice is far different. A lung cancer detection AI system may be unable to determine whether a fractured rib or pneumonia is present on the same chest X-ray, whereas a radiologist could.

This is where there is a significant distinction between how an AI system and a Radiologist accomplish the same task.

The AI system is like a sniffer dog with an extremely acute sense of smell that can identify a single odor better than any person can. The doctor is like the lead detective in the investigation. The detective takes into consideration everything that has been provided (symptoms, family medical history, and so on) to develop a theory, while the dog was trained to identify only one part of the overall scene and has pointed to a single area. The dog’s focus on one narrow aspect is a valuable additional clue for the lead detective to consider.

Therefore, the most successful outcomes are achieved not by battling “Man vs Machine” but by working together. Studies have shown that diagnostic accuracy improves when AI systems act as a “second reader”. As a second reader, the AI identifies potential findings that the human eye may miss, while the doctor provides the context needed to make a decision based on both the AI’s findings and the doctor’s own knowledge.

In essence, we want to provide our Doctor with the best possible tools so that he/she can be the best possible Doctor.

Numbers That Matter: AI vs Human Performance

| Metric | Reported figure |
|---|---|
| AI accuracy in radiology tasks | 90-95%+ |
| Average radiologist accuracy | 85-90% |
| Reduction in diagnosis time with AI | Up to 30-50% |
| AI-assisted workflows | Improve outcomes significantly |

Who Is in Charge? Your Doctor and Their “AI Co-Pilot”

It’s really crucial that you realize that your doctor is ALWAYS in charge. A radiologist and an Artificial Intelligence (AI) work as colleagues, and in this new relationship the AI is best thought of as an extremely sophisticated “co-pilot.”

Consider a modern commercial airline pilot. The plane’s autopilot system flies the flight plan, monitors all sorts of data streams, and can detect in seconds things that a person might never see. But the seasoned pilot has ultimate decision-making responsibility, including how to respond to the weather and the ability to make emergency decisions to land safely. The AI is like the plane’s autopilot system: it provides the doctor with a lot of help and support through data and analysis. Nonetheless, the doctor is still the captain of your medical care.

The AI may identify minor or suspect anomalies on an MRI (or CT) scan at a Hospital that a busy physician wouldn’t have time to evaluate. At that point, the AI presents recommendations — not orders — for the doctor to examine, utilize his/her knowledge base and years of experience working with your specific personal health history, and decide if you do indeed have the condition(s) identified by the AI. Data comes from AI; Wisdom comes from Doctors.

Doctor vs AI vs Together

| Scenario | Doctor Alone | AI Alone | Doctor + AI |
|---|---|---|---|
| Complex diagnosis | Good | Moderate | Best |
| Speed | Moderate | Fast | Fast + accurate |
| Error rate | Medium | Medium | Lowest |

How Do We Know These AI Tools Are Safe and Effective?

Fortunately, these tools do not enter hospitals unchecked. In the United States, the FDA regulates these programs much as it regulates other medical devices. As such, before your physician can use an artificial intelligence program, the product must be evaluated to ensure it is safe and effective for use in caring for patients.

An example of how this works in the USA: FDA Approval Process for AI Medical Imaging Software

There are several steps involved in obtaining FDA approval for AI medical imaging software. The process varies depending on the type of software; in every case, however, the AI must pass each required test before it is permitted to assist a physician in treating patients.

Testing at multiple levels is conducted before approval can be granted. To validate the performance of the AI system, three levels of evaluation take place before the FDA grants clearance:

- Testing on Thousands of New Images (Level I): This level is referred to as “extensive testing.” The AI system is tested on thousands of medical images it has never seen before. The purpose is to evaluate whether the system can correctly classify each image into one of the specific categories on which it was trained, without human intervention. Success here provides assurance that the training actually produced the ability to perform the intended function accurately.

- Clinical Validation (Level II): Following Level I testing, the next step is to test the tool in a clinical or simulated clinical setting. In this stage, researchers compare how well the tool identifies disease with how well physicians do. The results are critical in establishing whether the tool gives physicians valuable diagnostic information without confusing them.

- FDA Review: After Level I and Level II testing are complete, a group of scientists, physicians, and engineers who work for the FDA conducts a thorough review of all the data collected. Once they have completed their review, they may approve the tool for use with patients.
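To make the first level of testing more concrete, here is a small sketch of how performance on never-before-seen images can be summarized. It compares the AI’s answers with physician labels and reports accuracy, sensitivity (how many diseased cases were caught), and specificity (how many healthy cases were correctly cleared). The function name `evaluate` and the sample labels are made up for illustration; they are not taken from any FDA procedure.

```python
def evaluate(truth, predicted):
    """Compare AI predictions with physician labels on held-out images.

    Both arguments are equal-length lists of booleans where True means
    "disease present". Assumes at least one diseased and one healthy case.
    """
    pairs = list(zip(truth, predicted))
    tp = sum(1 for t, p in pairs if t and p)          # disease, caught
    tn = sum(1 for t, p in pairs if not t and not p)  # healthy, cleared
    fp = sum(1 for t, p in pairs if not t and p)      # healthy, flagged
    fn = sum(1 for t, p in pairs if t and not p)      # disease, missed
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }


# Illustrative labels only: 3 diseased and 5 healthy scans.
truth = [True, True, True, False, False, False, False, False]
predicted = [True, True, False, False, False, False, True, False]
print(evaluate(truth, predicted))
```

Reporting sensitivity and specificity separately matters because a tool can score high on overall accuracy while still missing many of the rare diseased cases.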

Beyond Accuracy: How AI Makes a Doctor’s Job Better

A single hospital generates, on average, more than 1,000 medical images per day. With so many images to review, a doctor must give each one the same careful attention, no matter how many turn out to be normal. This is another area where Artificial Intelligence offers significant benefits: it can very quickly sift through all the images so that doctors can focus on the ones that are relevant to them, and it can complete measurements on common examinations, much like an administrative assistant who handles the most time-consuming paperwork.

AI has provided doctors with an extremely effective tool for managing their workload and the repetitive tasks they would otherwise have to complete themselves. By taking over these repetitive tasks, it reduces some of the fatigue that contributes to mistakes in interpreting medical images. It also allows doctors to focus on the diagnostic puzzles and patient interactions that are the essence of good doctoring, rather than solely on the “next item” in the routine task list.
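The sifting described above can be pictured as sorting a reading list. The sketch below is only an illustration under stated assumptions: the `Study` class, the `suspicion` score (a hypothetical number from 0 to 1 produced by an AI model), and the 0.8 cut-off are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Study:
    study_id: str
    suspicion: float    # hypothetical AI score: 0 = looks normal, 1 = very suspicious
    arrival_order: int  # position in the original first-come, first-served queue


def prioritize(studies, urgent_threshold=0.8):
    """Split the day's studies into urgent and routine reading lists."""
    # Most suspicious first; ties keep their original arrival order.
    ordered = sorted(studies, key=lambda s: (-s.suspicion, s.arrival_order))
    urgent = [s for s in ordered if s.suspicion >= urgent_threshold]
    routine = [s for s in ordered if s.suspicion < urgent_threshold]
    return urgent, routine
```

Nothing is discarded in this sketch. Every study still appears on one of the two lists, and only the order in which the doctor sees them changes.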

As doctors become more efficient in their practice and less fatigued by the burden of technology, they will provide higher-quality care to their patients.

What Are the Ethical Questions We Still Need to Answer?

To begin with, the development of these advanced health care technologies raises serious questions about trust and safety. AI systems need access to hundreds of thousands of prior medical scans to do their job, so a major concern is privacy. Researchers address this problem by anonymizing the data: they remove all personally identifiable information (e.g., name, date of birth, medical record number) from the images, so that the AI learns from the medical images alone rather than from any single person’s confidential files.
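As a rough picture of what anonymizing means in practice, the sketch below strips identifying fields from a scan’s accompanying record before it is used for training. The field names and the `anonymize` function are hypothetical, and real de-identification covers many more fields than the three shown here.

```python
# Hypothetical field names; real scan records carry many more identifiers.
IDENTIFYING_FIELDS = {"patient_name", "date_of_birth", "medical_record_number"}


def anonymize(record):
    """Return a copy of the record with identifying fields removed."""
    return {
        key: value
        for key, value in record.items()
        if key not in IDENTIFYING_FIELDS
    }


scan_record = {
    "patient_name": "Jane Example",
    "date_of_birth": "1970-01-01",
    "medical_record_number": "000000",
    "modality": "CT",
    "body_part": "chest",
}
print(anonymize(scan_record))
```

The function returns a new dictionary and leaves the original untouched, so the hospital’s own copy of the record is not altered.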

A second area that researchers must address is algorithmic bias. If the primary training database for an AI comprises data from only one particular population, then the AI may be less capable of effectively evaluating scans from patients outside that demographic group. Like a student who studied from only one book and then failed the final exam, an AI trained on narrow data struggles with anything outside it. Researchers are therefore working to train AIs on large, varied databases of patient information to help ensure fairness and effectiveness for all users.

The final issue is equitable availability. Will these advanced diagnostic imaging systems be available only to larger, wealthier hospitals, leaving smaller or rural hospitals without access? The overall objective is to create diagnostic imaging systems that are both cost-effective and easy to integrate into current practices, so that a patient in a small community can obtain the same level of diagnostic services as a patient in a major city. Fortunately, these questions are being actively worked on rather than treated as hypotheticals.
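One simple way to look for this kind of bias is to measure accuracy separately for each patient group instead of reporting a single overall number. The sketch below assumes each test case is a tuple of (group label, physician’s answer, AI’s answer); the function name `accuracy_by_group` and the group labels are placeholders for illustration.

```python
from collections import defaultdict


def accuracy_by_group(cases):
    """Compute the AI's accuracy separately for each patient group.

    Each case is a tuple: (group, physician_label, ai_prediction).
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, predicted in cases:
        total[group] += 1
        if truth == predicted:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


# Illustrative data only: the AI does well on group A, poorly on group B.
cases = [
    ("A", True, True), ("A", False, False), ("A", True, True), ("A", False, False),
    ("B", True, False), ("B", False, False), ("B", True, True), ("B", False, True),
]
print(accuracy_by_group(cases))  # {'A': 1.0, 'B': 0.5}
```

In this made-up data the overall accuracy is 75%, which hides the fact that one group is served far worse than the other.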

Before an artificial intelligence (AI) imaging system can be used on patients, it must undergo rigorous testing and obtain regulatory approvals from government agencies, including, but not limited to, the U.S. Food & Drug Administration (FDA). By proactively identifying the ethical issues associated with the use of AI in diagnostic services, researchers and other health care professionals are laying the foundation for creating better health experiences for all people through the safe and effective use of the technology.

The Future Is Now: What’s Next for Medical AI?

Today’s AI applications support physicians in determining a patient’s current medical condition, but the possibilities for the future of diagnostic AI are far larger. Next-generation systems are expected to predict and identify illnesses before they occur, providing an alert much like a weather app that tells you it is going to rain tomorrow and warns you of a severe storm that may arrive next week.

The predictive capabilities of these new systems stem from their ability to analyze numerous images alongside other kinds of data. Future systems will not only review your individual X-rays or MRIs but will also examine how those images relate to the other pieces of your “puzzle” (lab work, family medical history, genetics, etc.). This comprehensive view allows the systems to build a larger, more complete, and ultimately predictive picture of your health as it evolves over time.

This is leading to a significant paradigm shift in the practice of medicine. Where previously the focus was on treating disease reactively after it develops, AI will increasingly be used proactively to preserve health. For example, rather than only detecting a cancerous tumor once it has formed, a future system might use your health data to assess whether you are at increased risk of developing a particular disease three or four years down the road. With that knowledge, you and your physician would have the option to implement lifestyle changes or initiate earlier treatment to alter the disease’s course and move towards wellness.

Your Empowered Role in the Future of Healthcare

What was once thought of as science fiction is now a real clinical resource. AI will not replace your doctor. It will be a “tireless co-pilot” that has analyzed over 100 million scans and helps a skilled clinician identify the smallest signs of illness. Continued collaboration between clinicians and AI will show us how far AI’s diagnostic capabilities can grow.

Equipped with this knowledge, you can have greater confidence in health care. When AI is part of the medical process for you, your family, or your loved ones, you can become a knowledgeable partner in the decision-making process and ask a very effective question: “How does AI help you in your work?”

This one basic question shifts the conversation from uncertainty to a cooperative relationship founded upon mutual respect and confidence.

By combining human expertise with machine precision and using AI to streamline healthcare workflows, we are moving towards a future where answers arrive more rapidly, treatments begin sooner, and doctors spend less time staring at computer screens and more time doing what matters most: caring for their patients.