Today’s technology has created tremendous opportunities for artificial intelligence (AI) models. These models are creating innovations in every area, including voice assistants and predictive analytics. However, evaluating how well an AI model performs its intended task requires identifying specific metrics to measure its performance. In this article, we discuss the most commonly used metrics for evaluating a machine learning algorithm’s performance and creating benchmarks for comparing multiple algorithms.

Before discussing individual metrics, let us define the purpose of machine learning model evaluation. Evaluation provides insight into how effectively a model performs and determines whether it has accomplished its established goal. While accuracy is often the primary goal in developing a machine learning model, it is also necessary to understand which aspects of a model perform well and which perform poorly.


Summary

Evaluation is more than a one-time check that a model performs as intended. It is an ongoing activity that evolves as new data becomes available and objectives shift. Unlike static testing, it requires continuous learning by the developer and adaptability within the AI system itself.

The Importance of Evaluation

Continuous evaluation enables AI developers to understand how their product functions across different environments and to adjust their development strategy accordingly, ultimately producing a higher-quality product.

The Evaluation Process

Evaluation typically includes training a model on one dataset, testing on a second dataset, and assessing how well the model performs using various metrics. It also allows for the identification of problems related to fitting (overfitting or underfitting), which may undermine the model’s reliability. Dividing the available data into three sets (training, validation, and test) facilitates improved evaluation of the model’s ability to generalize.
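The three-way split described above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a prescribed procedure: the function name `split_dataset`, the 70/15/15 proportions, and the fixed seed are all assumptions chosen for the example.

```python
import random

def split_dataset(rows, train_frac=0.7, val_frac=0.15, seed=42):
    """Shuffle rows and split them into train, validation, and test lists.

    The fractions and seed are illustrative defaults, not recommendations.
    """
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is repeatable
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]      # whatever remains is the test set
    return train, val, test

# Hypothetical dataset of 100 row identifiers.
train, val, test = split_dataset(range(100))
print(len(train), len(val), len(test))  # 70 15 15
```

The validation set is used while tuning, and the test set is held back until the end so that it gives an unbiased estimate of how the model generalizes.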

Following preliminary training, there is often room for improvement through “model tuning”: adjusting hyperparameters, selecting features from the available information, or modifying the algorithm. Evaluation provides the feedback needed to refine the model and improve its prediction performance.

AI Evaluation Workflow

| Step | Action | Outcome |
|---|---|---|
| Data Split | Train/test split | Fair evaluation |
| Model Training | Fit model | Learn patterns |
| Evaluation | Apply metrics | Measure performance |
| Validation | Cross-validation | Reduce bias |
| Optimization | Tune model | Improve results |

Common Challenges in Evaluation

Evaluation is required for most applications, but the process poses significant challenges that can undermine the accuracy and reliability of its conclusions. The first major challenge is choosing evaluation metrics that match the AI application’s goals; a poor match leads to incorrect conclusions about a model’s performance.

The second major challenge is navigating the complexities of real-world data. Real-world data is typically “messy” (it contains irrelevant or extraneous information) and incomplete, which makes it difficult to assess fairly how well a model performs under the conditions being evaluated.


Finally, interpreting the evaluation results is critical to providing stakeholders with useful insight into what the metrics mean in terms of actual usage.

Essential Metrics for AI Model Evaluation

Classification Metrics Comparison

| Metric | Formula | Best Use Case | Limitation |
|---|---|---|---|
| Accuracy | (TP+TN)/Total | Balanced datasets | Misleading in imbalance |
| Precision | TP/(TP+FP) | Spam/fraud detection | Ignores FN |
| Recall | TP/(TP+FN) | Medical diagnosis | Ignores FP |
| F1 Score | Harmonic mean of P & R | Imbalanced data | Harder to interpret |
| ROC-AUC | Area under curve | Overall model quality | Needs probability scores |


Accuracy

Accuracy is one of the easiest-to-understand and most popular metrics: it measures the proportion of predictions your model gets right. Its major drawback is that it does not account for imbalanced datasets. If one class is far more common than another, a model can score well simply by predicting the most common class most of the time.

Accuracy is also limited on its own because it gives no insight into false positives and false negatives, whose importance depends greatly on the application. In medical diagnostics, for example, a single false negative can be costly even when the overall accuracy rate is high. So, use accuracy alongside other metrics to fully evaluate your model.
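To make the imbalance problem concrete, here is a small sketch using made-up labels: 95 negatives and 5 positives, scored against a “model” that always predicts the majority class. The data and the helper name `accuracy` are assumptions for illustration only.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)

# Hypothetical imbalanced labels: 95 negatives (0) and 5 positives (1).
y_true = [0] * 95 + [1] * 5
# A trivial "model" that always predicts the majority class.
y_pred = [0] * 100

print(accuracy(y_true, y_pred))  # 0.95, yet every positive case is missed
```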

Precision

Precision is the ratio of true positive predictions to all positive predictions. It matters most in fields where false positives carry significant costs, such as spam filtering and medical diagnosis. High precision means that few of the model’s positive predictions are false alarms, which makes it an important measure for models whose incorrect positive predictions could be dangerous.

Fraud detection and security are two application areas where high precision prevents resources from being wasted on false alarms. However, precision says nothing about the number of false negatives. For this reason, many practitioners use precision together with recall to gain a better understanding of a model’s overall performance.
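As a rough sketch, precision can be computed directly from true and predicted labels. The spam-filter labels below are invented, and returning 0.0 when the model predicts no positives is a convention chosen for this example rather than a universal rule.

```python
def precision(y_true, y_pred, positive=1):
    """TP / (TP + FP): the share of predicted positives that are truly positive."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if p == positive and t == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if p == positive and t != positive)
    if tp + fp == 0:
        return 0.0  # assumption: report 0.0 when no positives are predicted
    return tp / (tp + fp)

# Hypothetical spam-filter labels: 1 = spam, 0 = legitimate.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 0, 1, 0, 0, 0, 1]

print(precision(y_true, y_pred))  # 0.75: 3 true positives, 1 false positive
```

Note that the missed spam message (the false negative in the third position) has no effect on the result, which is why precision is usually read alongside recall.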


Recall (Sensitivity)

Recall (sensitivity) is the proportion of actual positive instances that your model correctly identifies. It is important to track because missing a single positive instance can carry substantial cost (e.g., when trying to detect diseases). In other words, high recall means you are capturing nearly all of the real positive instances, thereby minimizing false negatives.

For example, in an application that sends emergency notifications (e.g., for disasters), missing an event (i.e., a false negative) would be very costly, so a high level of recall is needed. However, placing too much emphasis on recall usually leads to more false positives (i.e., predicting positives that are not actually positive), so recall must be balanced against precision.
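The trade-off between recall and precision can be seen by moving a decision threshold over a model’s scores. The sketch below uses invented scores and two arbitrary thresholds; the function name `recall_and_precision` is hypothetical.

```python
def recall_and_precision(y_true, scores, threshold):
    """Return (recall, precision) when scores >= threshold are predicted positive."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for t, p in zip(y_true, preds) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, preds) if t == 1 and p == 0)
    fp = sum(1 for t, p in zip(y_true, preds) if t == 0 and p == 1)
    recall = tp / (tp + fn) if tp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    return recall, precision

# Hypothetical labels (1 = positive) and model scores.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.6, 0.4, 0.7, 0.45, 0.2, 0.1]

strict = recall_and_precision(y_true, scores, 0.5)    # recall 0.75, precision 0.75
lenient = recall_and_precision(y_true, scores, 0.35)  # recall 1.0, precision about 0.67
print(strict, lenient)
```

Lowering the threshold catches the last positive (recall rises to 1.0) but also admits another false positive, so precision falls.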

F1 Score

The F1 Score is the harmonic mean of precision and recall, so the two are weighted equally. Because it takes both false positives and false negatives into account, it is useful when the dataset is skewed towards one class. This lets you use the F1 Score to evaluate how well your model balances precision and recall.

The F1 Score can also help you set your model’s decision threshold according to your requirements for precision and recall. Because both false positives and false negatives have significant cost implications in many applications, it offers greater insight into how well your model performs than either metric alone.
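A short sketch of the harmonic mean shows why F1 rewards balance. The first call uses a precision of 0.80 and a recall of 0.75; the second pair of values is invented to show what happens when the two are far apart.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall: 2PR / (P + R)."""
    if precision + recall == 0:
        return 0.0  # assumption: define F1 as 0.0 when both inputs are 0
    return 2 * precision * recall / (precision + recall)

balanced = f1_score(0.80, 0.75)
lopsided = f1_score(0.95, 0.10)  # hypothetical: high precision, very low recall

print(round(balanced, 2))  # 0.77
print(round(lopsided, 2))  # 0.18
```

An arithmetic mean of 0.95 and 0.10 would be about 0.53, while the harmonic mean stays close to the weaker of the two values.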

ROC-AUC

The Receiver Operating Characteristic (ROC) curve plots the True Positive Rate (TPR) against the False Positive Rate (FPR) across all possible thresholds of a classifier.

The Area Under the Curve (AUC) measures how well the classifier can distinguish between the two classes. An AUC of 1.0 indicates perfect separation, while 0.5 is no better than random guessing, so a high AUC means the classifier separates the classes well across different thresholds.

In binary classification, ROC-AUC lets you assess a classifier’s ability to balance sensitivity and specificity and compare classifiers on their ability to detect a class, even when they use different threshold values. ROC-AUC therefore provides a threshold-independent overview of your classifier’s performance.
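One way to compute ROC-AUC without plotting the curve is to use its rank interpretation: the AUC equals the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, with ties counted as half. The sketch below applies that idea by brute force to invented scores; it is meant for illustration, not for large datasets.

```python
def roc_auc(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0   # positive ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half
    return wins / (len(pos) * len(neg))

# Hypothetical labels (1 = positive) and model scores.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.6, 0.4, 0.7, 0.45, 0.2, 0.1]

print(roc_auc(y_true, scores))  # 0.8125 (13 of 16 pairs ranked correctly)
```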

Mean Absolute Error (MAE)

The mean absolute error (MAE) of a regression model is the average magnitude of the errors in a set of predictions, disregarding their sign. This makes it easy to understand how far off your model’s predictions typically are.

The advantages of MAE include its ease of use, its expression of error magnitude in the same units as the original data (which aids interpretation), and its relative robustness to outliers. Its main disadvantage is that it weights all errors linearly, so it does not highlight large errors relative to small ones. For this reason, MAE is typically combined with another measure, such as Mean Squared Error (MSE), for a more complete picture of how well a model performs.
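As a minimal sketch, MAE is just the mean of the absolute differences. The values below are invented (think of them as prices in thousands) so that the result can be read in the same units as the data.

```python
def mean_absolute_error(y_true, y_pred):
    """Average of |actual - predicted| over all examples."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

# Hypothetical actual and predicted values.
actual = [200, 250, 300, 350]
predicted = [210, 240, 330, 350]

print(mean_absolute_error(actual, predicted))  # 12.5
```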

Mean Squared Error (MSE)

Mean Squared Error (MSE) is another common measure for regression models. It calculates the average of the squared differences (errors) between the actual and predicted values. Squaring the errors places greater emphasis on the predictions farthest from the actual values, so MSE is useful when large errors would have adverse effects.

MSE may be especially useful in environments where errors can cause significant harm (e.g., financial modeling, autonomous vehicle navigation). Although extreme data points (outliers) can inflate MSE, it provides a useful way to assess whether your model will perform well under conditions where accuracy is critical.
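The effect of squaring can be illustrated with two invented sets of predictions that have the same MAE (10) but very different MSE. The square root of MSE (RMSE) is included because it brings the result back to the original units.

```python
import math

def mean_squared_error(y_true, y_pred):
    """Average of (actual - predicted)^2 over all examples."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

actual = [200, 250, 300, 350]
small_errors = [210, 240, 310, 340]   # hypothetical: four errors of 10
one_big_error = [200, 250, 300, 390]  # hypothetical: a single error of 40

mse_small = mean_squared_error(actual, small_errors)
mse_big = mean_squared_error(actual, one_big_error)

print(mse_small)           # 100.0
print(mse_big)             # 400.0, four times larger despite the same MAE
print(math.sqrt(mse_big))  # 20.0 (RMSE, in the original units)
```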

Regression Metrics Comparison

| Metric | Description | When to Use | Key Insight |
|---|---|---|---|
| MAE | Avg. absolute error | General regression | Easy to interpret |
| MSE | Squared error | Penalize large errors | Sensitive to outliers |
| RMSE | Root of MSE | Real-world scale | More intuitive |
| R² | Variance explained | Model fit quality | Can be misleading |

R-Squared

The Coefficient of Determination (or R-squared) measures how closely your model’s predicted values match the observed data. It quantifies the proportion of variation in the dependent variable that the model explains, so higher values generally indicate a better fit.

While R-squared is easy to interpret and widely applied in regression analysis, it should be viewed cautiously: it can mislead you when the model is overfitted or when you compare models that differ in their number of predictor variables.
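A common way to compute R-squared is one minus the ratio of the residual sum of squares to the total sum of squares. The sketch below follows that definition on invented data and assumes the target values are not all identical.

```python
def r_squared(y_true, y_pred):
    """1 - SS_res / SS_tot, the share of variance in y_true explained by y_pred."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R-squared is undefined when all targets are equal")
    return 1 - ss_res / ss_tot

# Hypothetical observed and predicted values.
actual = [1.0, 2.0, 3.0, 4.0, 5.0]
predicted = [1.5, 2.0, 3.0, 4.0, 4.5]

print(round(r_squared(actual, predicted), 2))    # 0.95
print(round(r_squared(actual, [3.0] * 5), 2))    # 0.0: no better than predicting the mean
```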

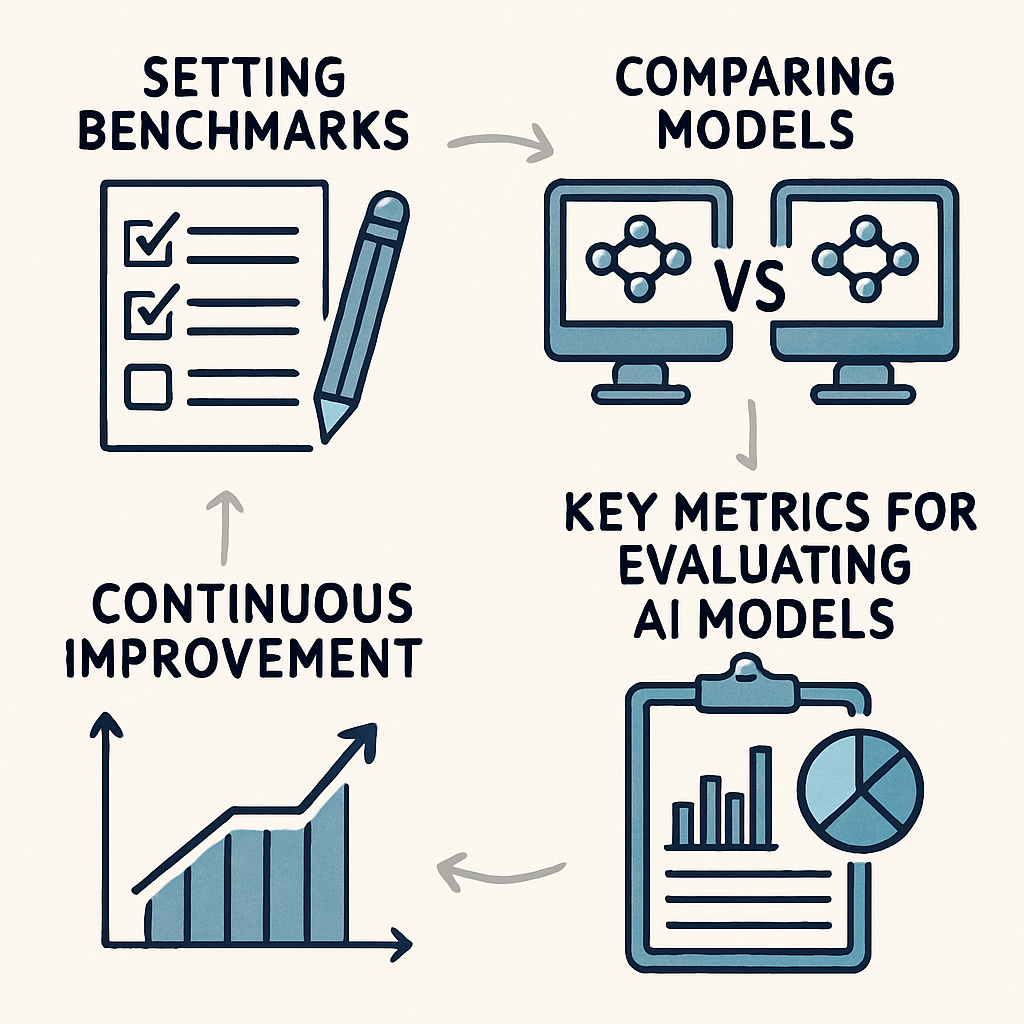

Performance Benchmarking

Performance benchmarking is the process of comparing your AI model to standard benchmarks or other models. Benchmarking enables you to evaluate where improvements are needed and to ensure your model remains competitive.

Creating benchmarks means establishing the performance levels you expect from your model. Benchmarks may be based on industry standards, past models, or your company’s needs. They align model development with your company’s strategy and provide a baseline against which to measure the model. Because industries change and new information emerges, you should update your benchmarks regularly so that your model keeps meeting current expectations and standards.


Comparing Models

The purpose of model comparison is to evaluate the output of each model on an identical dataset. Because the same criteria are used to measure every model’s performance, each model can be judged on its own merits and you can select the best-performing model for your needs.

Comparing models this way reveals the strengths and weaknesses of each model and gives direction for continued improvement and optimization. In a competitive marketplace, a model’s performance can directly affect a company’s success.

Model Comparison Benchmark

| Model | Accuracy | Precision | Recall | F1 Score |
|---|---|---|---|---|
| Logistic Regression | 85% | 80% | 75% | 77% |
| Random Forest | 92% | 88% | 90% | 89% |
| Neural Network | 94% | 91% | 93% | 92% |

Insight:

Benchmarking helps select the best-performing model based on multiple metrics, not just accuracy.
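A comparison like the one in the table can be sketched by scoring every model’s predictions against the same test labels with the same metric function. The labels, the predictions, and the names `model_a` and `model_b` below are all hypothetical; they are chosen so that both models have identical accuracy but different F1 scores.

```python
def evaluate(y_true, y_pred):
    """Compute accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

# Hypothetical shared test labels and predictions from two hypothetical models.
y_test = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
predictions = {
    "model_a": [1, 1, 0, 0, 0, 0, 0, 0, 0, 0],
    "model_b": [1, 1, 1, 0, 1, 0, 0, 0, 0, 0],
}

results = {name: evaluate(y_test, preds) for name, preds in predictions.items()}
for name, metrics in results.items():
    print(name, {k: round(v, 2) for k, v in metrics.items()})

# Pick the model with the highest F1 rather than the highest accuracy.
best = max(results, key=lambda name: results[name]["f1"])
print("Best by F1:", best)  # Best by F1: model_b
```

Both models score 0.8 on accuracy, so only the additional metrics separate them. Which metric should decide the winner depends on the cost of each error type in the application.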


Continuous Improvement

Benchmarking is an ongoing process rather than a single event. By benchmarking regularly, you can evaluate each of your models against established standards and against other models, and so identify areas for improvement. This ongoing evaluation and comparison fosters innovation and helps ensure that all models stay current with available technology.

Continued improvement through benchmarking encourages teams to keep evaluating and optimizing their models. It not only improves the performance of your models but also contributes to overall organizational success.

Challenges in AI Model Evaluation

Although evaluating AI models is critical, it presents several difficulties, including the following:

Imbalanced Datasets

An imbalanced dataset, in which one class has far more data points than the others, makes accuracy an unreliable evaluation metric. Precision, recall, and the F1 Score are better suited to imbalanced datasets. Careful attention must also be given to how you sample your data, for example by oversampling the minority class and/or undersampling the majority class.

Synthetic Minority Oversampling Technique (SMOTE) is a more advanced method that generates new synthetic examples of the minority class from existing ones, which can help make a dataset more balanced.
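The simplest form of oversampling duplicates randomly chosen minority examples until the classes are the same size. The sketch below shows that plain random oversampling for a binary problem; it is not SMOTE, which creates new synthetic points instead of copies. The function name, the seed, and the data are assumptions for illustration.

```python
import random

def random_oversample(rows, labels, minority_label, seed=0):
    """Duplicate random minority rows until both classes have equal counts.

    Assumes a binary problem with at least one minority example.
    """
    rng = random.Random(seed)
    minority = [r for r, y in zip(rows, labels) if y == minority_label]
    if not minority:
        raise ValueError("no examples with the minority label")
    majority_count = sum(1 for y in labels if y != minority_label)
    extra_needed = max(0, majority_count - len(minority))
    extra = [rng.choice(minority) for _ in range(extra_needed)]
    new_rows = list(rows) + extra
    new_labels = list(labels) + [minority_label] * len(extra)
    return new_rows, new_labels

# Hypothetical dataset: 8 majority rows (label 0) and 2 minority rows (label 1).
rows = list(range(10))
labels = [0] * 8 + [1] * 2

balanced_rows, balanced_labels = random_oversample(rows, labels, minority_label=1)
print(balanced_labels.count(0), balanced_labels.count(1))  # 8 8
```

Resampling is normally applied to the training set only, so that the validation and test sets still reflect the real class distribution.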

Real-World Example: Imbalanced Dataset Problem

| Example | Fraud Detection Model |
|---|---|
| Dataset | 98% non-fraud (majority class) |
| Model accuracy | 98% (misleading) |
| Recall for fraud | 20% (poor) |
| Insight | High accuracy doesn’t mean a good model; recall and F1 score matter more |


Overfitting and Underfitting

Overfitting occurs when a model learns the training data too well (including noise), so it performs poorly on new data. Conversely, underfitting occurs when the model is too simple to capture the relationships in the data, so it performs poorly on both training and new data. Cross-validation helps detect both problems, while early stopping and regularization help limit overfitting.

Additionally, proper data cleaning (preprocessing) and selecting the right features for your model help you avoid overfitting or underfitting. Setting the complexity of your model appropriately lets you achieve a good trade-off between bias and variance, which makes your model more robust.
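Cross-validation, mentioned above, rotates which part of the data is held out so that every example is used for testing exactly once. The sketch below generates the index sets for k contiguous folds; the function name is hypothetical, and in practice the data would usually be shuffled before folding.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for each of k contiguous folds."""
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, test_idx
        start += size

folds = list(k_fold_indices(10, 3))
for train_idx, test_idx in folds:
    print("test:", test_idx)
# test: [0, 1, 2, 3]
# test: [4, 5, 6]
# test: [7, 8, 9]
```

Training on each `train_idx` and scoring on the matching `test_idx` gives k scores. A large gap between training and held-out scores suggests overfitting, while low scores on both suggest underfitting.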

Overfitting vs Underfitting

| Issue | Training Accuracy | Test Accuracy | Cause | Solution |
|---|---|---|---|---|
| Overfitting | High | Low | Model too complex | Regularization, dropout |
| Underfitting | Low | Low | Model too simple | Increase complexity |
| Good Fit | Balanced | Balanced | Proper learning | Maintain tuning |


Selection of Appropriate Metrics

Selecting metrics that fit your specific problem is critical. Different tasks are typically evaluated using different metrics, and the wrong metrics can lead to an inaccurate conclusion about how well the model performed. The first step in identifying the most effective metrics for an AI application is to gain a solid understanding of the domain and objectives of the specific task.

It is beneficial to work with domain experts when drawing up a list of potential metrics and to align the selected metrics with business goals. Most evaluations use multiple metrics to assess different aspects of model performance, providing a well-rounded assessment.

Conclusion

Evaluating an AI model is crucial during development because it determines whether the model performs well and meets its intended goals. Using the proper evaluation metrics (such as accuracy, precision, recall, etc.) enables a comprehensive assessment of how well a model performs. Performance benchmarks add a further point of comparison and help guide improvements to the model over time.

Using effective methods to evaluate AI models will enable organizations to unlock AI’s full potential, encourage innovation, and support informed decision-making. Continuous evaluation and optimization of AI models allows them to be flexible enough to adjust to changing environments and provide ongoing value by pushing the boundaries of technological capabilities.

Q&A

Question: Why isn’t accuracy alone a reliable metric for evaluating AI models?

Short answer: Accuracy can be misleading—especially with imbalanced datasets—because it doesn’t distinguish between false positives and false negatives. A model can appear “accurate” by predicting the majority class most of the time while missing critical minority cases. Complement accuracy with precision, recall, F1, and ROC-AUC (for classification) to capture error trade-offs and the model’s actual discriminative ability.

Question: When should I prioritize precision, recall, or the F1 score?

Short answer: Choose based on the cost of errors in your application. Use precision when false positives are costly (e.g., fraud alerts, medical positives that trigger interventions). Use recall when missing positives is expensive (e.g., disease detection, emergency alerts). Use F1 when you need a single score that balances both (e.g., when dealing with imbalanced datasets or when both error types matter).

Question: What does ROC-AUC measure, and how should I use it?

Short answer: ROC-AUC summarizes how well a binary classifier separates positive from negative classes across all decision thresholds. A higher AUC indicates better overall discrimination, regardless of the chosen threshold. It helps compare models fairly, after which you can tune thresholds to achieve the desired precision/recall trade-off for deployment.

Question: For regression, when should I use MAE, MSE, or R-squared?

Short answer: Use MAE for an intuitive, unit-consistent view of typical error that’s less sensitive to outliers. Use MSE when large errors are especially undesirable because it penalizes them more heavily. Use R-squared to quantify how much variance in the target your model explains; interpret it cautiously, as it can appear strong even when a model overfits or when variables are added without improving generalization.

Question: How do I run a fair evaluation and benchmarking process?

Short answer: Split data into training, validation, and test sets (or use cross-validation), and compare models on the same datasets with consistent metrics. Set clear performance targets tied to business goals, then iterate: tune hyperparameters, adjust features/algorithms, and reevaluate. Address common pitfalls by handling class imbalance (e.g., oversampling, undersampling, SMOTE), monitoring overfitting/underfitting (regularization, early stopping), and updating benchmarks over time as data and objectives evolve.
