Neural networks are among the most significant areas in which machine learning and artificial intelligence are changing how people use technology. This practical guide explains neural networks clearly, so that anyone interested can understand their basics and applications.

At their simplest, neural networks are computing systems loosely modeled on the human brain. They are composed of layers of interconnected nodes (often referred to as “neurons”) that process data by identifying patterns within it. Just as the human brain learns from experience, a neural network learns to complete tasks by adjusting the strengths of the connections between its neurons.


Summary

An artificial neural network is a learning model inspired by the way layered neural connections in the brain identify patterns.

The sections below give an overview of the layers of an artificial neural network (input, hidden, and output), explain how a network is trained via backpropagation, give several examples of how neural networks are used in different industries, discuss their benefits (models that can be very accurate), and briefly outline their challenges (they can require large amounts of computation time and may be prone to overfitting).

In addition, this guide gives beginners the information they need to start working with artificial neural networks, names two commonly used libraries for developing them (TensorFlow and PyTorch), and identifies several available online tutorial resources.

How Do Neural Networks Work?

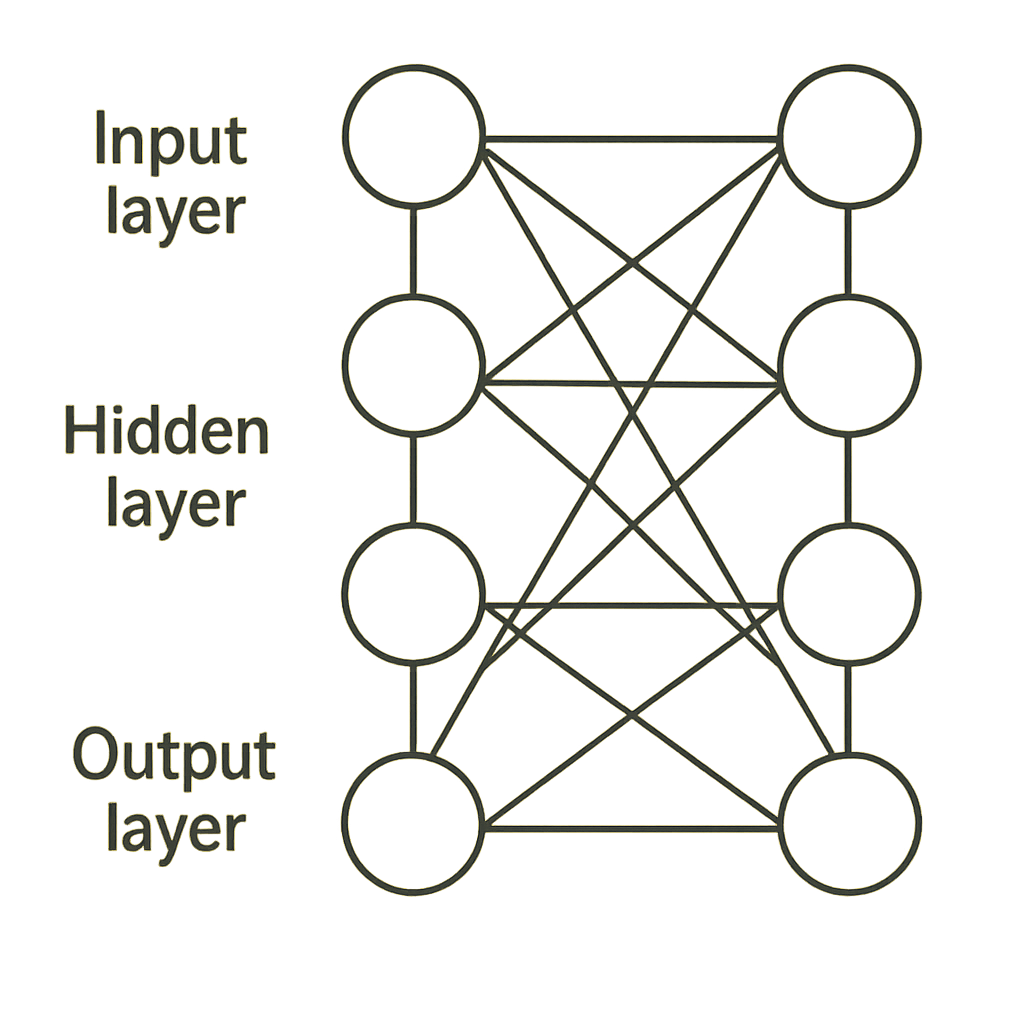

The input layer of a neural network accepts raw input data. One or more hidden layers transform that data, for example by identifying patterns in it, and the output layer presents the result of those operations. Depending on the task, the result may be a class label (as in picture classification) or a numeric prediction.

What are Neural Networks?

Artificial intelligence (AI) and machine learning (ML) both use neural networks, which are loosely based on how our minds evaluate and process information. Essentially, a neural network consists of several layers of connected nodes. Each node applies a simple mathematical operation to its inputs, typically a weighted sum followed by an activation function. The processed data then flows on through the following layers of connected nodes.
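To make the idea of a node concrete, here is a minimal sketch of a single artificial neuron. It assumes the common formulation of a weighted sum plus a bias, passed through a sigmoid activation. The input values, weights, and bias are hypothetical and chosen only for illustration.

```python
import math

def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs: list[float], weights: list[float], bias: float) -> float:
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)

# Hypothetical values chosen only for illustration.
output = neuron(inputs=[0.5, -1.0, 2.0], weights=[0.4, 0.3, -0.2], bias=0.1)
print(f"neuron output: {output:.4f}")
```

A full network is many of these neurons arranged in layers, with the outputs of one layer serving as the inputs of the next.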

As data moves through the network, each layer passes its results to the next, and over the course of training the network comes to identify patterns in the training data. This ability to identify patterns has enabled neural networks to be used in many applications, including image recognition, natural language processing, and decision-making.

The most common type of neural network contains three kinds of layers. The input layer receives all input data for the network. The hidden layers take the input data and, through calculations, produce useful features.

Finally, the output layer generates outputs, such as classifications or predictions, depending on what the network is trying to accomplish. By using large amounts of data, a network can learn to recognize complex patterns and produce accurate predictions. This has led to major advancements across numerous industries, including healthcare, finance, and technology.


Importance in Machine Learning and Artificial Intelligence

The significance of machine learning and artificial intelligence should not be underestimated. Both have many applications across society (e.g., financial institutions, health care, transportation), and adopting these technologies will have far-reaching consequences.

Machine learning enables computers to analyze large amounts of data, recognize patterns, and produce predictive outputs. These outputs are then used to make informed decisions and optimize business processes across a wide variety of industries. As technology continues to advance, it will become even more important for organizations and individuals to understand how machine learning and artificial intelligence work so they can use them to create innovative products or services and increase productivity.

Training Neural Networks

Neural networks must be trained before they are useful. During training, the network is fed a large volume of input data and adjusts the strengths of the connections between its neurons based on the error in its output. Training is iterative: data passes forward through the network to produce an output, and the error is passed backward to adjust the connections. This process repeats until the network’s output is accurate enough.

Real-World Example: Neural Network in Action

| Stage | Image Recognition Example |
|---|---|
| Input | Image pixels |
| Processing | Neural network processes features through layers |
| Output | Identifies objects (example: Cat, Dog) |
| Result | High accuracy in computer vision tasks |
| Used in | Facial recognition and medical imaging |


The Structure of Neural Networks

Layers of a Neural Network

Most neural networks have multiple connected layers. Each layer works with the others to allow the network to take in data (input), make decisions about the data at various levels (processing), and generate an output based on the input. Generally speaking, there are three types of layers in a neural network: input layers, hidden layers, and output layers.

The input layer receives data as input values into the neural network. These values can be numbers, images, or any other type of data needed for analysis. The input layer is important because it initiates the processing of the data fed into the network.

Hidden layers are located between the input and output layers. They process the information sent to them from the input layer. The number of hidden layers and the number of neurons per hidden layer depend on the complexity of the task. Hidden layers enable the network to identify patterns and features in data, allowing it to classify data accurately.

The final layer is the output layer. The output layer generates results from the computations performed by the preceding layers. The output can be a classification label or a regression value. All three layers enable the network to learn from data and use that learning to make informed decisions.
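The flow from input layer to hidden layer to output layer can be sketched in a few lines. This is a minimal illustration, assuming fully connected layers, a ReLU activation in the hidden layer, and a linear output. The layer sizes, weights, and biases are hypothetical rather than learned.

```python
def dense(inputs, weights, biases, activation):
    """One fully connected layer: each row of `weights` feeds one neuron."""
    return [
        activation(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]

def relu(z):
    return max(0.0, z)

def identity(z):
    return z

# Hypothetical network: 3 inputs -> 2 hidden neurons -> 1 output.
hidden_weights = [[0.2, -0.5, 0.1], [0.7, 0.3, -0.4]]
hidden_biases = [0.0, 0.1]
output_weights = [[1.0, -1.0]]
output_biases = [0.5]

x = [1.0, 2.0, 3.0]                                         # input layer
hidden = dense(x, hidden_weights, hidden_biases, relu)      # hidden layer
y = dense(hidden, output_weights, output_biases, identity)  # output layer
print(hidden, y)
```

In a real network the weights and biases would be learned during training instead of being written by hand.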

Input Layer

The input layer is the starting point for data processed by an artificial neural network, which makes it the first layer in the network’s architecture. It receives the input data (images, text, etc.) in the form the network was designed to accept.

Each node, or neuron, in the input layer corresponds to one feature or attribute of the input data, so the number of input neurons equals the dimensionality of the input. Once the data has been arranged in the order the input layer expects, it passes through multiple layers of computation and transformation before a prediction or classification is made.
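As an illustration of one feature per input neuron, the sketch below flattens a small grayscale image into an input vector. The 2x3 image and the choice to scale pixel values into the range 0 to 1 are assumptions made for this example.

```python
def image_to_input(pixels: list[list[int]]) -> list[float]:
    """Flatten a 2-D grid of 0-255 grayscale values into one feature per pixel."""
    return [value / 255.0 for row in pixels for value in row]

# Hypothetical 2x3 grayscale image.
image = [
    [0, 128, 255],
    [64, 32, 16],
]
features = image_to_input(image)  # six features -> six input neurons
print(len(features), features)
```

A network reading this image would need six input neurons, one for each pixel.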

Hidden Layers

Neural networks contain hidden layers that transform the data before the final output is produced. They are called “hidden” because their computations happen between the input and output layers and are not directly visible from outside the network.

Stacking layers of neurons with non-linear activation functions enables the hidden layers to represent more complex relationships, which enhances the network’s ability to learn more complex ideas.

Most neural networks include at least one hidden layer; some models use multiple hidden layers. Additional hidden layers can allow the network to capture more intricate relationships in the data. For example, in an image recognition application it is common for the first hidden layer to capture simple edges and for each additional layer to capture increasingly complex shapes.

Choosing the number of hidden layers in a model, as well as the number of neurons in each layer, is therefore critical: too few limit what the model can learn, while too many let the model fit noise in the training data, producing a model that performs poorly on new data.
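One rough way to see how these choices affect model size is to count the trainable parameters. The sketch below assumes a fully connected network in which every neuron has one weight per input plus one bias. The two architectures compared are hypothetical.

```python
def count_parameters(layer_sizes: list[int]) -> int:
    """Weights plus biases in a fully connected network with the given layer sizes."""
    return sum(
        (n_in + 1) * n_out  # n_in weights and 1 bias per neuron
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )

# Hypothetical architectures for a 784-feature input and 10 output classes.
small = count_parameters([784, 16, 10])
large = count_parameters([784, 512, 512, 10])
print(small, large)
```

The larger network has far more parameters to fit, which gives it more capacity but also more room to memorize noise.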

In conclusion, hidden layers are a major contributor to the success of neural networks. By stacking enough layers of neurons, deep learning models can extract sophisticated feature representations from very large amounts of data, resulting in high performance across applications including natural language processing and image recognition. Designing hidden layers remains an active area of research in artificial intelligence and machine learning.


Output Layer

The output layer is the last layer of a neural network. At this point, the processing performed by the previous layers is complete and its result is ready to be used to achieve the network’s goal.

The output layer takes data from the previous layers and presents it in a form suited to the task (for example, classification or prediction). In most cases, there is one neuron in the output layer for each of the network’s desired outputs.

For example, if you want your neural network to classify an image as one of 16 animals (dog, cat, bear, lion, tiger, wolf, elephant, monkey, giraffe, leopard, jaguar, puma, coyote, bobcat, mountain lion, or zebra), then you would need 16 neurons in the output layer.

Also, the type of activation function used in the output layer depends on the task. If you are using the neural network to classify images into several mutually exclusive categories, such as different animal species, you would typically use a softmax activation function. (For a two-way decision such as animals versus non-animals, a single sigmoid output is a common alternative.)

Softmax ensures that the probabilities you get back add up to 1 (100%); for example, one category might receive 99% and another 1%. On the other hand, if you were using a neural network to predict how much money a customer will spend at a store, you would use a linear activation function, which lets the network output a continuous number as its prediction.
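The softmax step can be sketched as follows. This is a minimal version, assuming the output layer produces one raw score per class. The class names and scores are hypothetical.

```python
import math

def softmax(logits: list[float]) -> list[float]:
    """Turn raw output-layer scores into probabilities that sum to 1."""
    peak = max(logits)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical raw scores from a three-class output layer.
classes = ["cat", "dog", "zebra"]
probabilities = softmax([2.0, 1.0, 0.1])
predicted = classes[probabilities.index(max(probabilities))]
print(probabilities, predicted)
```

A linear output, by contrast, simply returns the raw score unchanged, which is why it suits predictions such as a spending amount.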

How Neurons Interconnect and Process Data

Neurons are cells within the nervous system that carry out specific functions, including the reception and relay of information. They interact via synapses — small spaces between the end of one neuron and the start of the next.

When one neuron sends a message to another, it sends a signal down the length of its axon until the signal reaches the synapse at the end.

At this point, the neuron releases chemical signals (neurotransmitters) into the synapse. They cross the gap and attach to receptor sites on the receiving neuron. This transfers the message from one neuron to the next, and many such connections together form a network. These networks enable the processing of large amounts of information through numerous connections.

Understanding how neurons connect to one another is important to understanding how information is processed in our brains. Each individual neuron can connect to thousands of other neurons. As a result, our brains contain many complex networks that allow us to feel sensations, think, and move. Furthermore, connections between neurons vary in strength and efficiency, and they can change over time (a process referred to as synaptic plasticity).

Synaptic plasticity plays an important role in how we learn and remember: when new experiences occur, some neural pathways strengthen while others weaken. How neurons connect to one another is therefore vital to our brains’ ability to process information and react appropriately to our surroundings. Artificial neural networks borrow this idea: the adjustable weights on their connections play a role loosely analogous to synaptic strength.

Neural Network Components Table

| Component | Function | Example |
|---|---|---|
| Input Layer | Receives data | Image pixels |
| Hidden Layers | Process data | Feature extraction |
| Output Layer | Produces result | Classification |
| Weights | Adjust importance | Model tuning |
| Activation Function | Adds non-linearity | ReLU, Sigmoid |


The Learning Process

Understanding Training and Backpropagation

The act of “training” an ML model involves the model learning and adjusting its internal parameters to make accurate predictions. For this learning to occur, the model must be given a large amount of labeled data that includes both the input and the expected result.

The model learns from the patterns in the data it is fed and attempts to minimize its errors by adjusting its parameter values. Backpropagation is the technique used to work out those adjustments: it calculates gradients, which describe how the model’s error changes as each parameter changes.

Each training step has two stages, or passes: the forward pass and the backward pass. In the forward pass, the model receives some input data and generates an output, which is compared with the true label for that input using a loss function. The backward pass then uses the chain rule of calculus to find the gradient of the loss with respect to each parameter of the model.

These gradients are then used to update the model’s parameters with an optimization technique such as stochastic gradient descent, reducing the loss. This cycle is repeated over many iterations. One complete pass through the training data is called an epoch, and training typically runs for many epochs until a satisfactory level of accuracy is reached.
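The loop of forward pass, loss, backward pass, and parameter update can be sketched on the smallest possible model. The example below is a simplification under stated assumptions: it trains a single linear neuron (one weight and one bias) with a mean squared error loss, and it uses plain full-batch gradient descent instead of the stochastic variant. The data, learning rate, and epoch count are hypothetical.

```python
# Hypothetical data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b = 0.0, 0.0        # parameters to learn
learning_rate = 0.05
losses = []

for epoch in range(2000):              # one epoch = one pass over the data
    grad_w = grad_b = 0.0
    loss = 0.0
    for x, y in zip(xs, ys):
        prediction = w * x + b          # forward pass
        error = prediction - y
        loss += error ** 2 / len(xs)    # mean squared error
        # Backward pass: chain rule gives d(loss)/dw and d(loss)/db.
        grad_w += 2 * error * x / len(xs)
        grad_b += 2 * error / len(xs)
    w -= learning_rate * grad_w         # gradient descent update
    b -= learning_rate * grad_b
    losses.append(loss)

print(f"w={w:.3f}, b={b:.3f}, final loss={losses[-1]:.6f}")
```

In a multi-layer network the backward pass applies the same chain rule layer by layer, which libraries such as TensorFlow and PyTorch automate.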

The Role of Data in Training Neural Networks

- Training neural networks depends heavily on data.

- Neural networks process an enormous amount of data to “learn”.

- The data can be in various formats (images, text, numbers).

- High-quality data will improve your models’ predictive and classification accuracy.

- The data is split into three primary categories during training:

  - The training set: teaches the network.

  - The validation set: assesses the model’s performance during training.

  - The testing set: evaluates the model’s performance on unseen data after training.

- Selecting and preparing these datasets properly is very important.

- The quality and quantity of the data are critical for successfully training a neural network.
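The three-way split described above might be sketched like this. The 70/15/15 ratio, the fixed random seed, and the `split_dataset` helper are assumptions for illustration, not a prescribed recipe.

```python
import random

def split_dataset(samples, train=0.7, validation=0.15, seed=0):
    """Shuffle, then cut into training, validation, and test portions."""
    shuffled = samples[:]
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train)
    n_val = round(len(shuffled) * validation)
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],
    )

# Hypothetical dataset of 100 sample IDs; the 70/15/15 ratio is an assumption.
train_set, validation_set, test_set = split_dataset(list(range(100)))
print(len(train_set), len(validation_set), len(test_set))
```

Shuffling before splitting helps each portion resemble the whole dataset, and keeping the test set untouched until the end is what makes its score a fair estimate on unseen data.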

Activation Functions Comparison Table

| Function | Formula / Range | Use Case | Advantage |
|---|---|---|---|
| ReLU | max(0, x) | Deep learning | Fast computation |
| Sigmoid | 1/(1+e^-x) | Binary classification | Smooth output |
| Tanh | tanh(x), output from -1 to 1 | Hidden layers | Zero-centered |
| Softmax | e^(x_i) / Σ_j e^(x_j), outputs sum to 1 | Multi-class output | Interpretable |

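The element-wise activation functions in the table are short enough to write out directly. The sketch below covers ReLU, sigmoid, and tanh. Softmax is left out because it operates on a whole vector of scores, not on a single value. The sample inputs are arbitrary.

```python
import math

def relu(x: float) -> float:
    """Pass positive values through unchanged; clip negatives to zero."""
    return max(0.0, x)

def sigmoid(x: float) -> float:
    """Map any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def tanh(x: float) -> float:
    """Map any real number into the range (-1, 1), centered on zero."""
    return math.tanh(x)

for x in (-2.0, 0.0, 2.0):
    print(f"x={x:+.1f}  relu={relu(x):.3f}  sigmoid={sigmoid(x):.3f}  tanh={tanh(x):.3f}")
```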

Real-World Applications of Neural Networks

One of the advantages of neural networks is their versatility and applicability across many areas of study. Some examples follow:

- Speech and Image recognition: Since neural networks can identify patterns, they are excellent at recognizing images and speech.

- Natural Language Processing (NLP): Neural networks are used in chatbots and translation software to both recognize and generate human language.

- Medical/Health Care: Neural Networks assist doctors in disease diagnosis by analyzing medical images and predicting patient outcomes.

- Stock Market/Fraud Detection: They help predict stock market trends and detect financial transaction fraud.

Image and Speech Recognition

Image recognition and speech recognition are two sophisticated technologies that give computers the ability to interpret what they see and hear. The primary objective of image recognition is to determine what is in an image by identifying objects, events, and other elements. It employs various techniques to enable computers to “see” and interpret images, much as humans do.

Speech recognition converts spoken language into written text by analyzing the audio signal to recognize individual words and phrases, allowing computers to understand and respond to users’ verbal commands.

Both technologies have a number of applications, including a better end-user experience in virtual assistants and mobile devices and improved accessibility for people with disabilities. As they continue to improve, they are likely to become more accurate, efficient, and adaptable to additional languages and dialects.

Natural Language Processing (NLP)

Natural language processing (NLP) is an area of computer science and artificial intelligence (AI) that focuses on interaction between humans and computers through natural language. The ultimate goal of NLP is to enable computers to understand human language and provide users with useful, meaningful answers.

NLP covers many tasks and faces several challenges. Tasks include understanding text, conversing with a user, and analyzing the positive or negative sentiment expressed in a communication. As a result of these capabilities, NLP has been used in numerous applications, such as customer service, language translation, and data analysis.

NLP researchers and developers apply complex algorithms and models to evaluate the linguistic features (syntax, grammar, semantics) that define human language. These models rely on machine learning (ML), which enables computers to learn from large datasets and to continually improve their ability to understand and generate human language.

Examples of NLP in use today include voice-controlled personal assistants (e.g., Siri), chatbots, and automated translators. These technologies work to make interaction between humans and machines flow smoothly. NLP will remain a vital component in the ongoing development of intelligent systems that advance human-computer interfaces.


Healthcare Applications

Healthcare applications are making a huge difference in how doctors and other medical professionals care for their patients. Because they make many daily responsibilities easier to complete, they also allow professionals to provide higher-quality care.

A wide variety of healthcare applications is available to both individuals and organizations. Mobile apps that track an individual’s health are among the simplest, while others are large-scale systems that allow healthcare providers to store and manage a patient’s history and upcoming appointments. These systems have significantly changed the relationship between providers and their patients and have helped improve patients’ overall health.

Healthcare applications have also enabled providers to communicate effectively with each other and with their patients. Many of these applications offer a secure way to message, schedule appointments, and share medical information in real time.

In addition to supporting faster diagnosis and treatment planning, the instant sharing of medical information through mobile apps and web-based services helps many patients access resources such as educational materials, personalized health tips, and reminders to proactively maintain their health.

Mobile and web applications have also given healthcare providers the tools to gather and evaluate large volumes of health-related information. This enables them to identify patterns in diseases and health conditions, improve patient care standards, and implement preventive measures.

Because they help meet the unique needs of individual patients while improving the overall structure of healthcare systems, these applications will remain essential to timely, patient-centered care as the healthcare environment evolves.

Financial Sector Applications

Technology has significantly changed how banks and other financial institutions conduct transactions and provide products and services to their customers. Additionally, it enables more efficient transaction completion, better risk assessment in lending, and improved overall customer service.

Examples of technological advancements in the financial services sector include mobile banking applications (e.g., Bank of America’s Mobile Banking App) that allow consumers to complete banking transactions on their mobile devices. The example above shows how technology can help businesses operate at an optimal level while creating additional avenues for providing products and services to individuals.

In addition to improving how businesses operate, financial institutions are using computers to develop increasingly sophisticated algorithms to analyze consumer spending patterns and identify investment opportunities based on market trends.

Neural Network Adoption & Impact Statistics

| Metric | Insight |
|---|---|
| AI market size (2024) | $500+ billion |
| Neural networks in AI apps | Majority usage |
| Accuracy improvement vs traditional ML | Significant increase |
| Industries using AI | Healthcare, finance, retail |
| Annual AI growth rate | 30%+ |


Advantages of Neural Networks

High Accuracy in Predictions

A key advantage of neural networks is that their predictions can be highly accurate. They achieve this by using sophisticated algorithms that draw on large amounts of historical data to identify patterns and trends.

High accuracy builds user confidence: users can rely on the forecasts a predictive tool generates to support informed decision-making in areas such as finance, healthcare, and weather. That confidence in turn enables them to develop more effective business plans, allocate resources more efficiently, and ultimately achieve better personal and professional outcomes.

Learning from Large Data Sets

Organizations today rely on analyzing large datasets, and data science and analytics dominate the business landscape. The more information an organization collects about its customers, operational activities, and financial performance, the greater its need for reliable methods of analyzing that data.

The analytical approaches used today to analyze large datasets (for example, to identify trends and patterns) give companies improved decision-making and increased operational efficiency across sectors such as finance, health care, and marketing.

While analyzing large datasets may involve many different techniques and algorithms, there is more to the process than choosing the correct technique. It also involves machine learning processes that enable computers to autonomously discover patterns in data and produce forecasts from them with little to no human input.

Companies that have successfully employed predictive analytics have used machine learning to improve their customer service and product offerings, and companies that use big data technologies (such as Hadoop and Spark) can store and process very large quantities of data.

Large-scale datasets have several advantages for analysis, but they also present challenges. A major one is ensuring the reliability of the data being used: if the data analyzed is incomplete or contains errors, the results of the analysis may not reflect the organization’s actual situation.

The second issue with large-scale data sets concerns privacy and ethics. Many organizations within the financial and healthcare sectors maintain personally identifiable information (PII), which must be safeguarded against unauthorized disclosure. By establishing data governance processes and adhering to relevant regulations and laws, organizations can reduce potential risks associated with collecting, storing, analyzing, and using large amounts of data while generating valuable insights into their operations.

In general, analyzing large-scale data sets presents organizations with numerous opportunities for expansion and improvements. Organizational performance can be improved by leveraging large-scale data collection, developing new methodologies and analytical techniques to process this data, investing in the necessary technology to perform such analyses, and developing strategies to address issues such as data quality and PII privacy.

In the future, as the volume of organizational data continues to grow, so too will the importance of leveraging the knowledge embedded in these large collections.

Challenges and Limitations

Computational Requirements

Assessing the computational requirements of a neural network application means determining what hardware and software it needs to run efficiently. The most obvious hardware considerations are processing power, memory (RAM), and storage; training in particular can require very large amounts of computation time. The application also needs a compatible operating system to achieve the desired level of functionality.

A thorough analysis of both the hardware and the software on which an application will run helps deliver the best possible user experience.

Overfitting and Its Implications

- When a model fits the training data too closely, it picks up all the variations and noise in the data; this is called overfitting.

- A model can perform very well on the training data, yet fail miserably on new, unseen data.

- In many real-world situations, the data used will vary significantly from that used to train the model, leading to poor decisions based on inaccurate predictions.

- It is essential to address overfitting when building effective predictive models.

- Overfitting can have serious consequences in areas such as financial forecasting, medical diagnosis, and marketing.

- Using overfitted models can lead to poor decision-making by producing inaccurate predictions, such as recommending poor investments or misdiagnosing medical conditions.

- Methods to mitigate overfitting include cross-validation, regularization, and the use of a simpler model.

- Addressing overfitting helps develop predictive models that both accurately fit the training data and perform reliably in real-world applications.
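Of the mitigation methods listed above, cross-validation is easy to sketch without any library. The hypothetical `k_fold_indices` helper below assumes plain k-fold splitting over sample indices, with no shuffling or stratification. Each fold takes one turn as the validation set while the rest is used for training.

```python
def k_fold_indices(n_samples: int, k: int):
    """Yield (train_indices, validation_indices) for each of k folds."""
    indices = list(range(n_samples))
    fold_size, remainder = divmod(n_samples, k)
    start = 0
    for fold in range(k):
        # The first `remainder` folds get one extra sample each.
        stop = start + fold_size + (1 if fold < remainder else 0)
        validation = indices[start:stop]
        train = indices[:start] + indices[stop:]
        yield train, validation
        start = stop

folds = list(k_fold_indices(n_samples=10, k=3))
for train, validation in folds:
    print(f"train={train} validation={validation}")
```

A model that scores well on every validation fold is less likely to have merely memorized one particular training set.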

Advantages vs Limitations Table

| Aspect | Advantage | Limitation |
|---|---|---|
| Accuracy | High performance | Needs large data |
| Automation | Learns patterns | Hard to interpret |
| Scalability | Handles big data | High compute cost |
| Flexibility | Works across domains | Overfitting risk |


Getting Started with Neural Networks

Recommended Tools and Frameworks

Choosing appropriate tools and frameworks in software development will allow you to increase your productivity and create a higher-quality end product.

There are many tools available to help with tasks throughout the development cycle, such as programming languages, libraries, and operating systems. There are also frameworks that provide predefined building blocks and best-practice methods to make development easier; for neural networks, TensorFlow and PyTorch are two commonly used libraries. Researching these tools before adopting them can shorten your overall development time and aid collaboration.

In addition to researching tools and frameworks, consider what your project aims to accomplish, who is working on it (and their skill sets), and what has been implemented previously.

With an understanding of your goals, your team members’ skill sets, and the technologies currently in use, you can select the most suitable tools and use them effectively.

As technology advances rapidly, it is important to stay aware of new tools and frameworks being developed, as they can offer significantly enhanced features and greater efficiency than older alternatives.

Overall, taking the time to make informed decisions about the tools and frameworks used during the development cycle will greatly affect how well collaborative efforts among team members go. Additionally, through educated decision-making, developers will find ways to become more efficient and adaptable to changes.

Neural Network Workflow Table

| Step | Action | Outcome |
|---|---|---|
| Data Collection | Gather dataset | Raw input |
| Preprocessing | Clean/normalize | Better quality |
| Training | Train model | Learn patterns |
| Evaluation | Test accuracy | Measure performance |
| Optimization | Tune parameters | Improve results |

Conclusion: The Future of Neural Networks in Technological Advancements

Neural network technology is expected to drive advances in many technologies in the near future. Over time, the models will need to become better and more sophisticated. Neural networks are likely to positively influence a multitude of fields (artificial intelligence, healthcare, finance, and so on). As the field continues to grow, I believe there will be additional innovative applications that create positive changes in both industry and our daily lives. By incorporating neural networks into these systems, I believe we can look forward to increased efficiency, improved accuracy, and new, transformative approaches to solving complex issues.